Enterprise Data Architecture: Building the Foundation for Intelligent Agent Ecosystems

Transforming traditional data systems into AI-ready infrastructure

As organizations embrace artificial intelligence, the underlying data architecture must evolve to support intelligent agents. I'll guide you through the essential components, strategies, and considerations for creating enterprise data systems that empower rather than constrain your AI initiatives.

Evolution of Enterprise Data Architecture

I've watched enterprise data architecture transform dramatically over the past decades. What began as simple data warehousing has evolved into complex ecosystems designed to support intelligent agents and AI-driven decision making.

The evolution of enterprise data architecture from siloed systems to integrated AI agent ecosystems

Traditional data management approaches were built for human consumption and analysis. They typically featured:

- Rigid schema designs optimized for specific reporting needs

- Batch processing with daily or weekly update cycles

- Departmental data silos with limited cross-functional visibility

- Manual data governance processes focused on compliance

Today's AI-ready infrastructure demands a fundamentally different approach:

flowchart TD

A[Traditional Data Architecture] --> B[Data Lakes & Warehouses]

B --> C[BI & Analytics Systems]

C --> D[AI-Ready Infrastructure]

D --> E[Intelligent Agent Ecosystems]

style A fill:#ffe0cc,stroke:#FF8000

style B fill:#ffe0cc,stroke:#FF8000

style C fill:#ffe0cc,stroke:#FF8000

style D fill:#FF8000,stroke:#FF8000,color:#fff

style E fill:#FF8000,stroke:#FF8000,color:#fff

The key challenges I see in current enterprise data systems that limit AI agent potential include:

- Fragmented data sources that prevent holistic analysis

- Inconsistent data quality standards across systems

- Lack of semantic context that AI agents need for understanding

- Insufficient real-time processing capabilities

- Security models not designed for autonomous agent access

As AI-driven business intelligence matures, traditional BI tools and AI-generated insights are converging. Organizations that prepare their data foundations properly are best placed to benefit from that convergence.

Core Components of AI-Ready Data Architecture

In my experience designing AI-ready data architectures, I've identified several essential components that form the foundation for successful intelligent agent integration:

Core components of an AI-ready enterprise data architecture

Flexible Data Schemas

AI agents require data schemas that can evolve as the agents learn and as business needs change. I recommend implementing:

- Schema-on-read approaches that allow for data interpretation at query time

- Document databases for unstructured content that agents need to process

- Graph data models that capture relationships between entities

- Hybrid persistence strategies that combine structured and unstructured data
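
To make the schema-on-read idea concrete, here is a minimal sketch in Python. The record layout, the `ORDER_SCHEMA` mapping, and the `read_with_schema` helper are all illustrative assumptions, not part of any particular product. The raw records are stored untouched, and a schema is applied only when a reader asks for the data.

```python
import json
from typing import Any

# Raw records are stored as-is; no schema is enforced at write time.
RAW_EVENTS = [
    '{"customer_id": "42", "amount": "19.90", "channel": "web"}',
    '{"customer_id": 7, "amount": 5, "notes": "phone order"}',
]

# Hypothetical schema: field name -> (converter, default value).
# Different readers can apply different schemas to the same raw data.
ORDER_SCHEMA = {
    "customer_id": (int, None),
    "amount": (float, 0.0),
    "channel": (str, "unknown"),
}

def read_with_schema(raw: str, schema: dict[str, tuple[Any, Any]]) -> dict[str, Any]:
    """Parse one raw JSON record and project it onto the given schema."""
    record = json.loads(raw)
    result = {}
    for field, (convert, default) in schema.items():
        value = record.get(field)
        # Convert present values; fall back to the default for missing ones.
        result[field] = convert(value) if value is not None else default
    return result

orders = [read_with_schema(raw, ORDER_SCHEMA) for raw in RAW_EVENTS]
```

Fields that the schema does not mention (such as `notes`) stay in the raw store, so a later reader with a different schema can still use them.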

Data Governance for AI

Traditional data governance must evolve to accommodate AI agents while maintaining security and compliance.

Metadata Management

Metadata becomes even more critical in AI-ready architectures. I find these strategies essential:

- Business glossaries that define terms consistently across systems

- Automated metadata tagging using NLP and machine learning

- Lineage tracking to understand data origins and transformations

- Contextual metadata that explains relationships between data elements

Agent-to-Data Connection Mapping

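Creating robust systems for agent-to-data connection mapping is crucial for effective AI integration. In these systems, each agent request passes through authentication and access control before a connection mapping service routes it to the appropriate data source: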

flowchart LR

Agent[AI Agent] --> Auth[Authentication Layer]

Auth --> Access[Access Control]

Access --> Map[Connection Mapping Service]

Map --> D1[Data Source 1]

Map --> D2[Data Source 2]

Map --> D3[Data Source 3]

style Agent fill:#FF8000,stroke:#FF8000,color:#fff

style Map fill:#FF8000,stroke:#FF8000,color:#fff

Using PageOn.ai's AI Blocks, I can transform these complex data relationships into intuitive visual maps that help stakeholders understand how agents interact with enterprise data. This visualization capability bridges the technical and business understanding, making it easier to identify optimization opportunities.

Breaking Down Data Silos for Agent Integration

In my work with enterprise clients, I consistently find that data silos are the primary obstacle to effective AI agent integration. These isolated pockets of information prevent agents from accessing the complete context they need to deliver maximum value.

Breaking down organizational data silos to enable AI agent integration

Identifying Isolation Points

I recommend beginning with a comprehensive audit to identify legacy system isolation points:

- Departmental systems with no external APIs

- Custom applications with proprietary data formats

- Shadow IT systems outside central governance

- Acquisitions that were never fully integrated

API-First Approaches

Implementing API-first strategies creates the connective tissue that AI agents need:

flowchart TD

subgraph "Legacy Systems"

L1[ERP]

L2[CRM]

L3[HCM]

end

subgraph "API Layer"

A1[API Gateway]

A2[Authentication]

A3[Rate Limiting]

end

subgraph "Agent Layer"

AG1[AI Agent 1]

AG2[AI Agent 2]

AG3[AI Agent 3]

end

L1 --> A1

L2 --> A1

L3 --> A1

A1 --> A2

A2 --> A3

A3 --> AG1

A3 --> AG2

A3 --> AG3
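
The rate-limiting box in the API layer is often implemented as a token bucket. The sketch below is one possible version, not a description of any specific gateway product; the `TokenBucket` class and its parameters are assumptions made for illustration. The clock is injectable so the behavior can be checked without waiting.

```python
import time

class TokenBucket:
    """Allow bursts of up to `capacity` requests, refilled at `rate` tokens per second."""

    def __init__(self, capacity: int, rate: float, clock=time.monotonic):
        self.capacity = capacity
        self.rate = rate
        self.clock = clock
        self.tokens = float(capacity)
        self.updated = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, capped at capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False
```

A gateway would typically keep one bucket per agent, so a single noisy agent cannot exhaust the capacity of a legacy system behind the API.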

Unified Data Lakes and Knowledge Graphs

Creating unified data repositories provides AI agents with comprehensive access:

- Data lakes that store raw, unprocessed data at scale

- Knowledge graphs that capture relationships between entities

- Semantic layers that add meaning and context

- Master data management to ensure consistency

I've found PageOn.ai's Deep Search functionality particularly valuable in connecting previously disconnected data sources. It allows AI agents to discover relationships and patterns across organizational boundaries that would otherwise remain hidden.

Cross-Departmental Data Harmonization

Technical solutions alone aren't enough. Breaking down silos also requires organizational strategies.

Knowledge Architecture as the Backbone of AI Systems

While data architecture focuses on storage and access, enterprise knowledge architecture addresses the meaning and context of information. This semantic layer is what enables AI agents to truly understand your organization's information.

Knowledge graph architecture supporting AI agent understanding

Taxonomies and Ontologies

Creating a common language for humans and AI is foundational to effective agent integration:

flowchart TD

Taxonomy[Enterprise Taxonomy] --> Domain1[Finance Domain]

Taxonomy --> Domain2[HR Domain]

Taxonomy --> Domain3[Operations Domain]

Domain1 --> Concept1[Revenue Concepts]

Domain1 --> Concept2[Cost Concepts]

Domain2 --> Concept3[Employee Concepts]

Domain2 --> Concept4[Compensation Concepts]

Domain3 --> Concept5[Process Concepts]

Domain3 --> Concept6[Resource Concepts]

Concept1 -.-> Relation1{Relates to}

Concept2 -.-> Relation1

Relation1 -.-> Ontology[Enterprise Ontology]

style Taxonomy fill:#FF8000,stroke:#FF8000,color:#fff

style Ontology fill:#FF8000,stroke:#FF8000,color:#fff

Knowledge Graph Implementation

I've found these best practices essential for knowledge graph implementation:

- Start with high-value use cases rather than attempting to model everything

- Incorporate both structured data and unstructured content

- Use machine learning to continuously enhance and expand the graph

- Implement versioning to track how knowledge evolves over time

- Create feedback mechanisms for agents to improve the knowledge base

Semantic Layer Development

The semantic layer translates raw data into meaningful concepts:

| Semantic Layer Component | Purpose | AI Agent Benefit |
|---|---|---|
| Business Glossary | Define standard business terms | Consistent understanding of terminology |
| Concept Maps | Show relationships between ideas | Contextual reasoning capabilities |
| Inference Rules | Define logical relationships | Enable automated reasoning |
| Natural Language Processing | Extract meaning from text | Process unstructured documents |
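
As a small illustration of how inference rules enable automated reasoning, the sketch below stores facts as subject-predicate-object triples and applies a single transitivity rule until no new facts appear. The triples and the `infer_is_a_closure` function are hypothetical examples; a real deployment would more likely use a graph database or a reasoner.

```python
# Facts are (subject, predicate, object) triples; names are illustrative only.
facts = {
    ("Revenue", "is_a", "FinancialMetric"),
    ("FinancialMetric", "is_a", "BusinessConcept"),
    ("Q3 Revenue", "instance_of", "Revenue"),
}

def infer_is_a_closure(triples: set[tuple[str, str, str]]) -> set[tuple[str, str, str]]:
    """Apply one inference rule until nothing new is derived:
    if A is_a B and B is_a C, then A is_a C (transitivity)."""
    known = set(triples)
    while True:
        derived = {
            (a, "is_a", c)
            for (a, p1, b) in known if p1 == "is_a"
            for (b2, p2, c) in known if p2 == "is_a" and b2 == b
        }
        if derived <= known:
            # Fixed point reached: the rule produces no new facts.
            return known
        known |= derived

closed = infer_is_a_closure(facts)
```

After the rule runs, the graph also contains the derived fact that `Revenue` is a `BusinessConcept`, which an agent can use without that link ever being entered by hand.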

Using PageOn.ai's Vibe Creation tools, I can translate these complex knowledge structures into accessible visualizations that help stakeholders understand how AI agents interpret and use organizational knowledge. This visual approach significantly accelerates adoption and trust in AI systems.

Technical Infrastructure Requirements

The underlying technical infrastructure must evolve to support the computational demands of AI agents. In my experience, these requirements differ significantly from traditional data systems.

Technical infrastructure supporting AI agent ecosystems

Scalable Computing Resources

AI agent training and deployment require significant computational power:

- GPU clusters for model training and fine-tuning

- Elastic compute resources that scale with demand

- Specialized AI accelerators for specific workloads

- High-performance storage optimized for machine learning operations

Real-time Processing Capabilities

Responsive agent behavior depends on real-time data processing.

Edge Computing Considerations

Distributed AI processing at the edge offers several advantages:

- Reduced latency for time-sensitive applications

- Decreased bandwidth requirements for central systems

- Enhanced privacy by processing sensitive data locally

- Improved resilience through distributed processing

Cloud Architecture Patterns

Effective cloud architectures for AI agent ecosystems typically include:

flowchart TD

User[User Interface] --> API[API Gateway]

API --> Auth[Authentication]

Auth --> Agents[Agent Orchestration]

Agents --> Services[Microservices]

Services --> Data[Data Services]

Data --> Storage[(Storage Layer)]

Agents --> ML[ML Services]

ML --> Models[(Model Repository)]

style Agents fill:#FF8000,stroke:#FF8000,color:#fff

style ML fill:#FF8000,stroke:#FF8000,color:#fff

Using PageOn.ai's AI Blocks, I create visual infrastructure models that help technical teams plan capacity, identify potential bottlenecks, and ensure the architecture can support agent requirements. These visualizations have proven invaluable for aligning technical and business stakeholders around infrastructure investments.

Data Quality and Preparation for AI Consumption

In my experience implementing AI systems, data quality is often the determining factor in agent performance. AI agents have unique data quality requirements that go beyond traditional metrics.

Data preparation pipeline for AI agent consumption

AI-Specific Data Quality Metrics

I recommend establishing specialized metrics for AI-ready data that go beyond traditional data quality measures.

Automated Data Preparation Pipelines

Efficient data pipelines are essential for maintaining high-quality AI training data:

- Automated data profiling to identify quality issues

- Standardization processes for consistent formatting

- Deduplication workflows that preserve relationship integrity

- Anomaly detection to flag potential data problems

- Data enrichment to add contextual information
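
Several of the pipeline steps above can be sketched with the Python standard library alone. The sample records, field names, and the 3.5 threshold are assumptions for illustration. The anomaly check uses a modified z-score based on the median absolute deviation, which is one common choice among many.

```python
from statistics import median

records = [
    {"id": 1, "email": " Ana@Example.com ", "amount": 120.0},
    {"id": 2, "email": "ana@example.com", "amount": 120.0},
    {"id": 3, "email": "bo@example.com", "amount": 95.0},
    {"id": 4, "email": "cy@example.com", "amount": 110.0},
    {"id": 5, "email": "di@example.com", "amount": 9000.0},
    {"id": 6, "email": None, "amount": 105.0},
]

def profile(rows):
    """Profiling: count missing values per field."""
    missing = {}
    for row in rows:
        for key, value in row.items():
            missing[key] = missing.get(key, 0) + (value is None)
    return missing

def standardize(rows):
    """Standardization: trim and lowercase email addresses."""
    return [
        {**row, "email": row["email"].strip().lower() if row["email"] else None}
        for row in rows
    ]

def deduplicate(rows):
    """Deduplication: keep the first row per email; rows without email are kept."""
    seen, unique = set(), []
    for row in rows:
        key = row["email"]
        if key is not None and key in seen:
            continue
        seen.add(key)
        unique.append(row)
    return unique

def flag_anomalies(rows, threshold=3.5):
    """Anomaly detection: modified z-score using the median absolute deviation."""
    amounts = [row["amount"] for row in rows]
    center = median(amounts)
    mad = median([abs(a - center) for a in amounts])
    if mad == 0:
        return []
    return [r for r in rows if 0.6745 * abs(r["amount"] - center) / mad > threshold]

clean = deduplicate(standardize(records))
suspicious = flag_anomalies(clean)
```

Note that standardization has to run before deduplication here; otherwise the two spellings of the same email address would not be recognized as duplicates.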

Feature Engineering for Optimal Agent Performance

Feature engineering remains critical even with advanced AI models:

flowchart LR

Raw[Raw Data] --> Clean[Data Cleaning]

Clean --> Extract[Feature Extraction]

Extract --> Select[Feature Selection]

Select --> Transform[Feature Transformation]

Transform --> Validate[Validation]

Validate --> Agent[AI Agent Consumption]

style Extract fill:#FF8000,stroke:#FF8000,color:#fff

style Transform fill:#FF8000,stroke:#FF8000,color:#fff

style Agent fill:#FF8000,stroke:#FF8000,color:#fff
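
The stages in the diagram can be expressed as a chain of small functions. The sketch below assumes a toy customer dataset and hypothetical feature names; selection here simply drops constant features, and transformation is min-max scaling, both chosen only to keep the example short.

```python
from datetime import date

raw = [
    {"signup": date(2024, 1, 10), "last_order": date(2024, 3, 10), "orders": 4, "country": "DE"},
    {"signup": date(2024, 2, 1), "last_order": date(2024, 2, 11), "orders": 1, "country": "DE"},
    {"signup": date(2023, 12, 1), "last_order": date(2024, 3, 30), "orders": 9, "country": "DE"},
]

def extract(row):
    """Feature extraction: derive numeric features from raw fields."""
    return {
        "tenure_days": (row["last_order"] - row["signup"]).days,
        "orders": row["orders"],
        "is_de": 1 if row["country"] == "DE" else 0,
    }

def select(rows):
    """Feature selection: drop features that are constant across all rows."""
    keep = [k for k in rows[0] if len({r[k] for r in rows}) > 1]
    return [{k: r[k] for k in keep} for r in rows]

def transform(rows):
    """Feature transformation: min-max scale every feature to [0, 1]."""
    out = [dict(r) for r in rows]
    for k in rows[0]:
        lo, hi = min(r[k] for r in rows), max(r[k] for r in rows)
        for r in out:
            r[k] = (r[k] - lo) / (hi - lo)  # safe: select() removed constant features
    return out

def validate(rows):
    """Validation: every scaled value must lie in [0, 1]."""
    assert all(0.0 <= v <= 1.0 for r in rows for v in r.values())
    return rows

features = validate(transform(select([extract(r) for r in raw])))
```

Because every customer in this sample is in the same country, `is_de` carries no signal and is removed before scaling.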

Data Versioning and Lineage

Tracking how data evolves is essential for reproducible AI results:

- Version control for datasets used in training

- Complete lineage tracking from source to consumption

- Snapshot capabilities for point-in-time analysis

- Rollback mechanisms when quality issues are discovered
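
One lightweight way to get versioning, snapshots, and rollback is to address each dataset snapshot by a hash of its content. The `DatasetStore` class below is a hypothetical in-memory sketch of that idea; dedicated data versioning tools offer far more, but the core mechanism is similar.

```python
import hashlib
import json

class DatasetStore:
    """Keeps immutable, content-addressed snapshots plus a simple lineage record."""

    def __init__(self):
        self.snapshots = {}  # version id -> rows
        self.lineage = {}    # version id -> (parent version id, description)
        self.head = None     # version currently in use

    def commit(self, rows, description):
        """Store a snapshot; identical content always yields the same version id."""
        payload = json.dumps(rows, sort_keys=True).encode("utf-8")
        version = hashlib.sha256(payload).hexdigest()[:12]
        if version not in self.snapshots:
            self.snapshots[version] = json.loads(payload)  # store an independent copy
            self.lineage[version] = (self.head, description)
        self.head = version
        return version

    def rollback(self, version):
        """Point the head back at an earlier snapshot and return its rows."""
        if version not in self.snapshots:
            raise KeyError(version)
        self.head = version
        return self.snapshots[version]

store = DatasetStore()
v1 = store.commit([{"id": 1, "label": "ok"}], "initial load")
v2 = store.commit([{"id": 1, "label": "ok"}, {"id": 2, "label": None}], "appended batch")
```

Recording the version id alongside each trained model makes it possible to say exactly which data a given model saw.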

Security and Compliance Considerations

AI agents introduce new security challenges that traditional data protection approaches don't fully address. I've developed comprehensive frameworks to ensure both security and compliance.

Security framework for AI agent ecosystems

Zero-Trust Architecture

Zero-trust principles are particularly important for AI agent security:

- Continuous verification of agent identity and permissions

- Least privilege access for all AI components

- Micro-segmentation to limit potential breach impact

- End-to-end encryption for all agent communications

- Comprehensive monitoring and threat detection
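
Continuous verification and least privilege can be illustrated with short-lived, scoped tokens that are checked on every request. This is only a sketch: the `SECRET` value, the token layout, and the scope names are assumptions, and a real deployment would normally rely on an established standard and a managed key store rather than a hand-rolled format.

```python
import hashlib
import hmac

SECRET = b"demo-secret"  # hypothetical; load from a secrets manager in practice

def _sign(message: str) -> str:
    return hmac.new(SECRET, message.encode("utf-8"), hashlib.sha256).hexdigest()

def issue_token(agent_id: str, scope: str, expires_at: int) -> str:
    """Issue a token bound to one agent, one scope, and an expiry time."""
    message = f"{agent_id}|{scope}|{expires_at}"
    return f"{message}|{_sign(message)}"

def verify_request(token: str, required_scope: str, now: int) -> bool:
    """Re-check signature, scope, and expiry on every single request."""
    try:
        agent_id, scope, expires_at, signature = token.split("|")
    except ValueError:
        return False
    expected = _sign(f"{agent_id}|{scope}|{expires_at}")
    return (
        hmac.compare_digest(signature, expected)  # constant-time comparison
        and scope == required_scope
        and now < int(expires_at)
    )

token = issue_token("agent-7", "read:sales", expires_at=1000)
```

The token above is valid for `read:sales` until time 1000 and is rejected for any other scope, after expiry, or if any part of it has been altered.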

Data Privacy Frameworks

Privacy protection must be built into AI data architectures:

flowchart TD

Data[Data Source] --> Class[Data Classification]

Class --> PII{Contains PII?}

PII -->|Yes| Anon[Anonymization]

PII -->|No| Direct[Direct Processing]

Anon --> Access[Access Controls]

Direct --> Access

Access --> Agent[AI Agent]

style Class fill:#FF8000,stroke:#FF8000,color:#fff

style Anon fill:#FF8000,stroke:#FF8000,color:#fff
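
The classification and anonymization branch of this flow might look like the sketch below. The two regular expressions are deliberately simple assumptions that catch only obvious emails and phone numbers, and replacing values with salted hashes is strictly pseudonymization rather than full anonymization. Production systems usually combine pattern matching with dedicated PII detection.

```python
import hashlib
import re

# Deliberately simple patterns; real PII detection needs far broader coverage.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")

def contains_pii(text: str) -> bool:
    """Classification step: does the text contain an email or phone number?"""
    return bool(EMAIL.search(text) or PHONE.search(text))

def pseudonymize(match: re.Match, salt: str = "demo-salt") -> str:
    """Replace a matched value with a stable, salted placeholder."""
    digest = hashlib.sha256((salt + match.group(0)).encode("utf-8")).hexdigest()[:8]
    return f"<pii:{digest}>"

def prepare_for_agent(text: str) -> str:
    """Route text through pseudonymization only when PII is detected."""
    if not contains_pii(text):
        return text  # direct processing path
    text = EMAIL.sub(pseudonymize, text)
    return PHONE.sub(pseudonymize, text)

safe = prepare_for_agent("Contact ana@example.com or +1 415 555 0100 today")
```

Because the placeholder is derived from the original value, the same email always maps to the same token, so an agent can still tell that two records refer to the same person without seeing who it is.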

Audit Trails and Explainability

Transparent AI operations are essential for compliance:

- Comprehensive logging of all agent actions and decisions

- Explainable AI approaches that document reasoning

- Version control for models and training data

- Automated compliance reporting capabilities
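
For audit trails, one widely used technique is to chain log entries by hash so that later tampering is detectable. The `AuditLog` class below is a minimal sketch of that technique with hypothetical field names; it omits timestamps and persistent storage, which a real audit system would need.

```python
import hashlib
import json

class AuditLog:
    """Append-only log where each entry commits to the previous entry's hash."""

    GENESIS = "0" * 64

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(entry):
        # Hash every field except the stored hash itself.
        fields = {k: v for k, v in entry.items() if k != "hash"}
        return hashlib.sha256(json.dumps(fields, sort_keys=True).encode("utf-8")).hexdigest()

    def record(self, agent, action, reason):
        """Log an agent action together with the reasoning behind it."""
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS
        entry = {"agent": agent, "action": action, "reason": reason, "prev": prev_hash}
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)

    def verify(self):
        """Return True only if no entry was altered, removed, or reordered."""
        prev_hash = self.GENESIS
        for entry in self.entries:
            if entry["prev"] != prev_hash or entry["hash"] != self._digest(entry):
                return False
            prev_hash = entry["hash"]
        return True

log = AuditLog()
log.record("pricing-agent", "updated discount", "inventory above threshold")
log.record("pricing-agent", "notified manager", "discount exceeds 20 percent")
```

Storing the `reason` next to each action gives auditors the decision context as well as the event itself.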

Regulatory Compliance

AI systems must comply with an evolving regulatory landscape.

Using PageOn.ai's visualization tools, I create comprehensive security models that help identify potential vulnerabilities in AI systems. These visual representations make complex security concepts accessible to both technical and non-technical stakeholders, improving overall security posture.

Implementing a Strategic AI Transformation Roadmap

Transforming an organization's data architecture for AI integration requires a structured approach. I've developed a comprehensive methodology for creating and implementing AI transformation roadmaps.

Strategic AI transformation roadmap with implementation phases

Assessing Organizational Readiness

I begin every transformation with a thorough readiness assessment.

Phased Implementation Approach

A staged approach to AI transformation delivers value while managing risk:

flowchart LR

P1[Phase 1: Foundation] --> P2[Phase 2: Initial Capabilities]

P2 --> P3[Phase 3: Advanced Integration]

P3 --> P4[Phase 4: Ecosystem Development]

subgraph "Phase 1"

F1[Data Quality]

F2[Governance]

F3[Infrastructure]

end

subgraph "Phase 2"

C1[Pilot Agents]

C2[API Development]

C3[Skills Building]

end

subgraph "Phase 3"

A1[Enterprise Agents]

A2[Agent Orchestration]

A3[Process Integration]

end

subgraph "Phase 4"

E1[Agent Marketplace]

E2[Cross-Org Integration]

E3[Autonomous Systems]

end

style P1 fill:#FF8000,stroke:#FF8000,color:#fff

style P2 fill:#FF8000,stroke:#FF8000,color:#fff

style P3 fill:#FF8000,stroke:#FF8000,color:#fff

style P4 fill:#FF8000,stroke:#FF8000,color:#fff

Change Management Strategies

Technical transformation must be accompanied by organizational change:

- Executive sponsorship and visible leadership

- Clear communication of vision and benefits

- Skills development for affected teams

- Process redesign to incorporate AI capabilities

- Celebration of early wins to build momentum

Skills Development

New competencies are required for teams working with AI agents:

| Role | Traditional Skills | AI-Ready Skills |
|---|---|---|
| Data Architect | Schema design, ETL, warehousing | Knowledge graphs, vector databases, feature stores |
| Data Engineer | Batch processing, data pipelines | Streaming analytics, ML pipelines, agent interfaces |
| Data Analyst | SQL, reporting, dashboards | Prompt engineering, agent supervision, AI outputs analysis |
| Business User | Report consumption, basic analysis | Agent collaboration, prompt creation, feedback loops |

Using PageOn.ai's Agentic capabilities, I create compelling visual transformation roadmaps that help stakeholders understand the journey ahead. These visualizations make abstract concepts concrete and provide clear milestones for measuring progress.

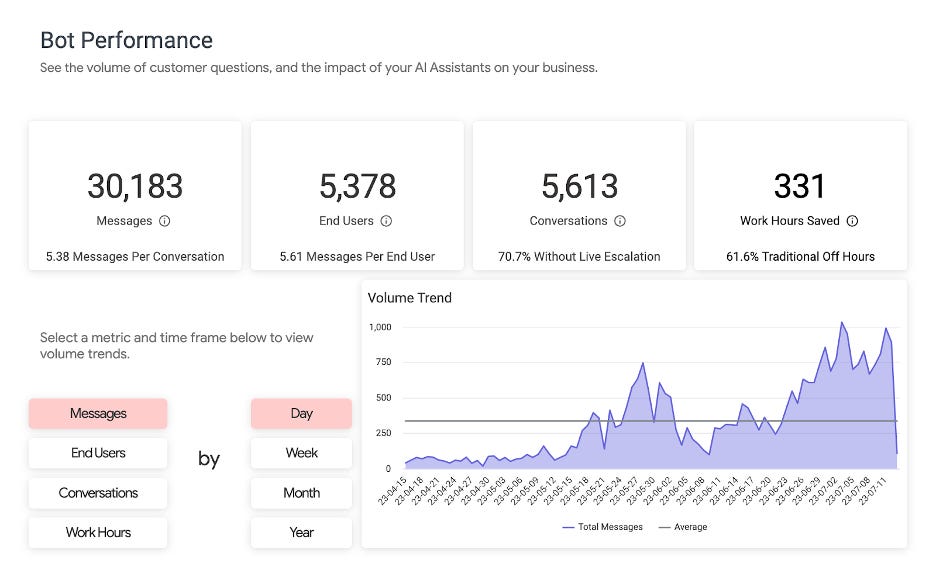

Measuring Success and ROI

Demonstrating the value of AI-ready data architecture investments requires thoughtful measurement approaches. I've developed frameworks that connect technical improvements to business outcomes.

Performance dashboard for tracking AI architecture ROI

Key Performance Indicators

Effective KPIs for AI-ready data architecture include:

- Data accessibility metrics (time to access, query performance)

- Agent performance indicators (accuracy, response time)

- Integration efficiency (API response times, successful connections)

- Knowledge quality measures (coverage, accuracy, freshness)

- Security and compliance metrics (vulnerabilities, audit findings)
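
Two of these indicators, agent accuracy and response time, are straightforward to compute from request logs. The sketch below assumes hypothetical sample data and uses the nearest-rank definition of a percentile; other percentile definitions give slightly different values on small samples.

```python
import math

def accuracy(outcomes):
    """Share of agent answers judged correct (True) by a reviewer or test set."""
    return sum(outcomes) / len(outcomes)

def percentile(values, pct):
    """Nearest-rank percentile: the smallest value with at least pct% of data at or below it."""
    ordered = sorted(values)
    rank = math.ceil(pct / 100 * len(ordered))
    return ordered[max(rank, 1) - 1]

# Hypothetical measurements from ten agent requests.
latencies_ms = [120, 95, 110, 480, 105, 130, 98, 101, 115, 125]
outcomes = [True, True, False, True]

kpis = {
    "accuracy": accuracy(outcomes),
    "p50_latency_ms": percentile(latencies_ms, 50),
    "p95_latency_ms": percentile(latencies_ms, 95),
}
```

Reporting a high percentile alongside the median matters here: the single 480 ms request barely moves the median but dominates the p95 value, which is closer to what an unlucky user experiences.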

Benchmarking Agent Performance

Comparing AI agents to traditional systems provides context for improvements.

Business Intelligence AI Metrics

Advanced business intelligence AI metrics demonstrate value creation:

flowchart TD

Data[Data Assets] --> Value[Value Creation]

subgraph "Traditional Metrics"

M1[Report Usage]

M2[Query Volume]

M3[Dashboard Views]

end

subgraph "AI-Enhanced Metrics"

A1[Insight Generation Rate]

A2[Decision Acceleration]

A3[Knowledge Discovery]

A4[Predictive Accuracy]

end

M1 --> Value

M2 --> Value

M3 --> Value

A1 --> Value

A2 --> Value

A3 --> Value

A4 --> Value

style A1 fill:#FF8000,stroke:#FF8000,color:#fff

style A2 fill:#FF8000,stroke:#FF8000,color:#fff

style A3 fill:#FF8000,stroke:#FF8000,color:#fff

style A4 fill:#FF8000,stroke:#FF8000,color:#fff

Using PageOn.ai's visualization capabilities, I transform complex ROI data into clear, compelling visuals that communicate value to stakeholders at all levels. These visualizations make it easier to secure continued investment in AI-ready data architecture initiatives.

Future-Proofing: The Intelligent Agents Industry Ecosystem

As the intelligent agent ecosystem evolves, data architectures must anticipate future requirements. I focus on building flexible foundations that can adapt to emerging technologies and use cases.

Future intelligent agent ecosystem with emerging technologies

Emerging Standards

Several standards and de facto conventions are emerging for agent-ready data systems:

- LangChain and similar frameworks for agent orchestration

- Vector database interfaces for semantic search

- Knowledge graph exchange formats

- Agent communication protocols

- Explainability standards for regulatory compliance
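
To show what a vector interface for semantic search does at its core, here is a minimal sketch using cosine similarity over a tiny in-memory index. The three-dimensional vectors and document titles are made up for illustration; real embeddings come from a model and have hundreds or thousands of dimensions, and real vector databases use approximate indexes rather than a full scan.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

# Hypothetical document embeddings (toy values).
INDEX = {
    "refund policy": [0.9, 0.1, 0.0],
    "quarterly revenue report": [0.1, 0.9, 0.2],
    "onboarding checklist": [0.0, 0.2, 0.9],
}

def search(query_vector, k=2):
    """Return the k documents whose vectors are most similar to the query."""
    ranked = sorted(INDEX, key=lambda doc: cosine(query_vector, INDEX[doc]), reverse=True)
    return ranked[:k]

results = search([0.2, 0.8, 0.1])
```

The query vector points mostly along the second dimension, so the revenue report ranks first even though the query shares no keywords with it; that is the property agents rely on for semantic retrieval.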

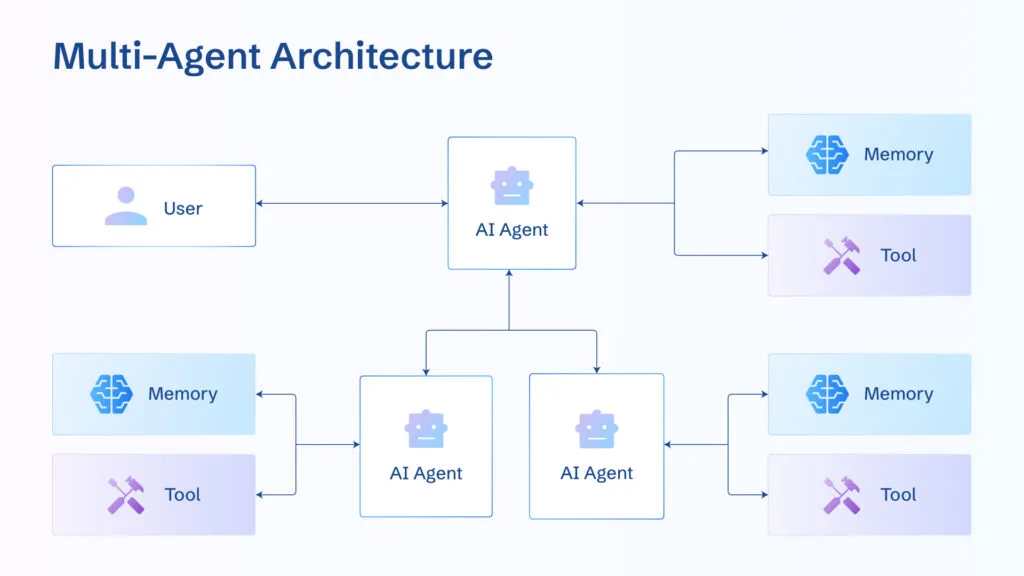

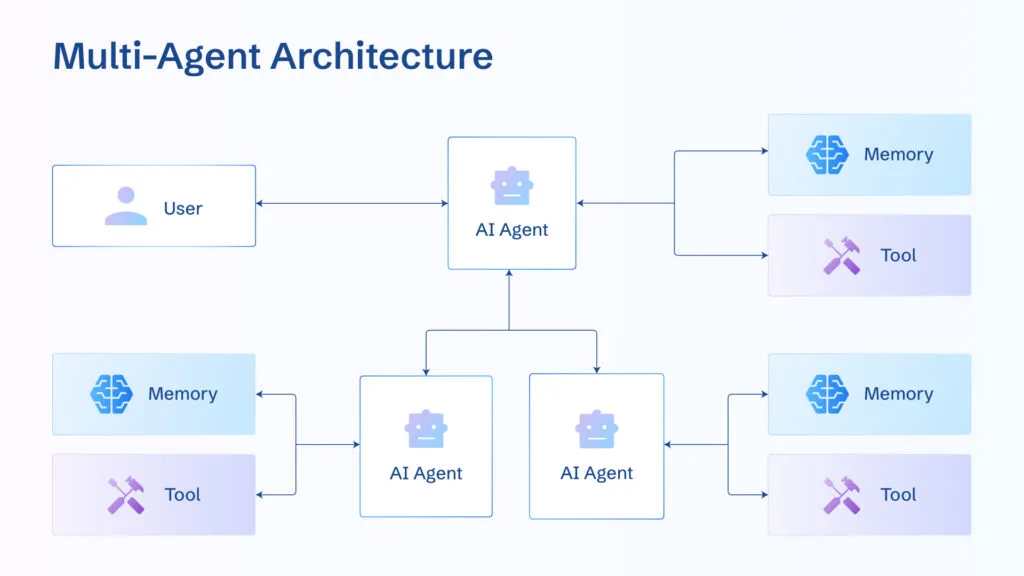

Multi-Agent Collaboration

Future architectures must support complex multi-agent scenarios:

flowchart TD

User[User] --> Orchestrator[Agent Orchestrator]

Orchestrator --> Agent1[Research Agent]

Orchestrator --> Agent2[Analysis Agent]

Orchestrator --> Agent3[Creative Agent]

Orchestrator --> Agent4[Critic Agent]

Agent1 --> Data1[(Knowledge Base)]

Agent2 --> Data2[(Analytical Data)]

Agent3 --> Data3[(Creative Assets)]

Agent4 --> Data4[(Evaluation Criteria)]

Agent1 --> Collab{Collaboration Layer}

Agent2 --> Collab

Agent3 --> Collab

Agent4 --> Collab

Collab --> Output[Final Output]

Output --> User

style Orchestrator fill:#FF8000,stroke:#FF8000,color:#fff

style Collab fill:#FF8000,stroke:#FF8000,color:#fff
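
A stripped-down version of this orchestration pattern is sketched below. The four agent functions are hypothetical stand-ins that return strings instead of calling models, and the shared dictionary plays the role of the collaboration layer. For simplicity the agents run in sequence, whereas the diagram allows them to work in parallel.

```python
from typing import Callable

# An agent takes the task and the shared workspace, and returns its contribution.
Agent = Callable[[str, dict[str, str]], str]

def research_agent(task: str, shared: dict[str, str]) -> str:
    return f"notes on {task}"

def analysis_agent(task: str, shared: dict[str, str]) -> str:
    return f"analysis of {shared['research']}"

def creative_agent(task: str, shared: dict[str, str]) -> str:
    return f"draft based on {shared['analysis']}"

def critic_agent(task: str, shared: dict[str, str]) -> str:
    return "approved" if "draft" in shared["creative"] else "rejected"

PIPELINE: list[tuple[str, Agent]] = [
    ("research", research_agent),
    ("analysis", analysis_agent),
    ("creative", creative_agent),
    ("critic", critic_agent),
]

def orchestrate(task: str) -> dict[str, str]:
    """Run each agent in turn; every agent can read what earlier agents wrote."""
    shared: dict[str, str] = {}
    for name, agent in PIPELINE:
        shared[name] = agent(task, shared)
    return shared

result = orchestrate("customer churn")
```

Keeping every intermediate contribution in the shared workspace, rather than only the final output, also gives the audit trail something concrete to record.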

Continuous Learning Infrastructure

Supporting evolving agent capabilities requires specialized infrastructure.

Next-Generation AI Requirements

Future data architectures should anticipate these emerging capabilities:

- Multimodal data processing (text, images, audio, video)

- Causal reasoning and inference capabilities

- Federated learning across organizational boundaries

- Quantum computing integration for specialized workloads

- Autonomous architecture optimization by AI systems

Using PageOn.ai to model future state architectures and transition paths helps organizations visualize their journey toward AI-ready data systems. These visual models create alignment around long-term architectural vision while identifying practical near-term steps.

Building Your AI-Ready Future

Throughout this guide, I've shared my approach to transforming enterprise data architecture for AI agent integration. The journey requires thoughtful planning, technical expertise, and organizational alignment.

As you embark on your own transformation, remember that visualization tools like PageOn.ai can dramatically accelerate understanding and alignment. The ability to visually express complex architectural concepts, data flows, and transformation roadmaps creates shared understanding across technical and business stakeholders.

By focusing on flexible data schemas, breaking down silos, building robust knowledge architecture, and implementing proper security controls, you'll create a foundation that not only supports today's AI capabilities but can evolve with emerging technologies.

The organizations that succeed in the age of intelligent agents will be those that thoughtfully prepare their data foundations. With the right architecture in place, AI agents become powerful partners in extracting value from enterprise data.
