Transforming Conversational Data into Visual Insights: NLP Applications for Modern Analysis

Unlocking the power of NLP to extract meaning from human conversations

In today's digital age, I've witnessed a remarkable transformation in how we analyze conversations. With the explosion of digital communication channels, we're now generating unprecedented volumes of conversational data across customer service interactions, social media exchanges, healthcare consultations, and business meetings. This wealth of information contains invaluable insights, but extracting meaningful patterns from unstructured text has historically been a significant challenge.

As communication increasingly moves to digital channels, organizations that can effectively analyze customer conversations gain a competitive advantage. Throughout this guide, I'll explore how Natural Language Processing (NLP) has revolutionized our ability to transform these conversations into visual insights that drive strategic decision-making.

The Evolution of Conversational Data Analysis

When I look back at how we've analyzed conversations over time, I'm struck by the dramatic evolution. In the early days, we relied heavily on manual review processes, which were time-consuming and limited in scale. Organizations could only analyze a tiny fraction of their conversational data, often missing critical patterns and insights.

The first wave of automation brought basic keyword analysis and rudimentary sentiment detection, but these approaches often missed context and nuance. A customer saying "This product is bad!" is clearly negative, but what about "This product is sick!", which could be positive in certain contexts? Early systems struggled with these distinctions.

[Chart: Evolution of Conversation Analysis Capabilities]

The transformative breakthrough came with advanced NLP technologies powered by machine learning and deep learning. These approaches have fundamentally changed how we process human language, enabling:

- Contextual understanding that captures the true meaning behind words

- Scale that allows analysis of millions of conversations

- Nuance detection that recognizes subtle emotional signals and implied meanings

- Cross-channel analysis that integrates insights from multiple communication platforms

Today, I'm seeing organizations use sophisticated AI analysis tools that can process vast volumes of conversational data, extract meaningful patterns, and present insights in visually compelling formats. This evolution has transformed conversational data from an untapped resource into a strategic asset.

Core NLP Technologies Powering Conversational Analysis

In my work with conversational data, I've found that several key NLP technologies form the foundation of effective analysis. Each serves a distinct purpose in extracting meaning from human language.

Core NLP Components for Conversation Analysis

The interconnected technologies that power modern conversational analysis:

```mermaid
flowchart TD
    A[Raw Conversation Data] --> B[Preprocessing]
    B --> C[Natural Language Understanding]
    B --> D[Sentiment Analysis]
    B --> E[Entity Extraction]
    B --> F[Topic Modeling]
    B --> G[Summarization]
    C --> H[Intent Recognition]
    D --> I[Emotion Detection]
    E --> J[Named Entity Recognition]
    F --> K[Theme Categorization]
    G --> L[Key Point Extraction]
    H & I & J & K & L --> M[Integrated Insights]
    M --> N[Visual Representation]
    style A fill:#FF8000,stroke:#FF8000,color:white
    style M fill:#E74C3C,stroke:#E74C3C,color:white
    style N fill:#66BB6A,stroke:#66BB6A,color:white
```

Natural Language Understanding (NLU)

At the heart of conversation analysis is NLU—the technology that helps machines comprehend human language in context. Modern NLU frameworks can identify a user's intent even when expressed in different ways. For instance, "How do I reset my password?" and "I can't log in" might both be classified under a "password help" intent despite using different phrasing.
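To make the intent idea concrete, here is a minimal sketch of example-based intent classification using bag-of-words cosine similarity. The intent names, training phrases, and the 0.2 threshold are all hypothetical. Note that "I can't log in" only lands in the password intent because a similar phrasing appears among the examples; modern NLU frameworks use learned embeddings so that paraphrases match without sharing words.

```python
import math
import re
from collections import Counter

# Hypothetical labeled examples; a real NLU system would learn from many more.
TRAINING_EXAMPLES = {
    "password_help": [
        "how do i reset my password",
        "i forgot my password",
        "i can't log in to my account",
        "login is not working",
    ],
    "billing": [
        "why was i charged twice",
        "update my credit card",
        "question about my invoice",
    ],
}

def tokenize(text: str) -> list[str]:
    """Lowercase and keep runs of letters, digits, and apostrophes."""
    return re.findall(r"[a-z0-9']+", text.lower())

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def classify_intent(utterance: str, threshold: float = 0.2) -> str:
    """Return the intent of the most similar training example, or 'unknown'."""
    query = Counter(tokenize(utterance))
    best_intent, best_score = "unknown", 0.0
    for intent, examples in TRAINING_EXAMPLES.items():
        for example in examples:
            score = cosine(query, Counter(tokenize(example)))
            if score > best_score:
                best_intent, best_score = intent, score
    return best_intent if best_score >= threshold else "unknown"

print(classify_intent("How do I reset my password?"))
print(classify_intent("I can't log in"))
```

The threshold lets off-topic messages fall through to "unknown" rather than being forced into the nearest intent.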

Sentiment Analysis

I've found sentiment analysis particularly valuable for understanding emotional context in conversations. Advanced algorithms can now detect not just positive, negative, or neutral sentiments, but also more nuanced emotions like frustration, confusion, satisfaction, or urgency. This emotional layer adds critical context to conversation analysis.
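As a rough illustration of how polarity and emotion labels can be attached to a message, here is a lexicon-based sketch with simple negation handling. The word lists are hypothetical and tiny; production systems typically use trained classifiers, but the output shape (a score, a label, and a set of emotion tags) is similar.

```python
import re

# Hypothetical mini-lexicons for illustration only.
POLARITY = {"great": 1.0, "helpful": 1.0, "resolved": 1.0, "thanks": 0.5,
            "bad": -1.0, "broken": -1.0, "annoying": -1.0, "slow": -0.5}
EMOTION_CUES = {"frustrated": "frustration", "annoying": "frustration",
                "confused": "confusion", "unclear": "confusion",
                "urgent": "urgency", "asap": "urgency", "thanks": "satisfaction"}
NEGATORS = {"not", "no", "never", "isn't", "wasn't", "don't", "doesn't"}

def analyze_message(message: str) -> dict:
    """Score polarity with negation flipping and collect emotion cues."""
    tokens = re.findall(r"[a-z']+", message.lower())
    score, emotions = 0.0, set()
    for i, tok in enumerate(tokens):
        if tok in EMOTION_CUES:
            emotions.add(EMOTION_CUES[tok])
        if tok in POLARITY:
            value = POLARITY[tok]
            # Flip polarity if a negator appears in the two preceding tokens ("not very helpful")
            if any(t in NEGATORS for t in tokens[max(0, i - 2):i]):
                value = -value
            score += value
    label = "positive" if score > 0 else "negative" if score < 0 else "neutral"
    return {"score": score, "label": label, "emotions": sorted(emotions)}

print(analyze_message("This is not helpful and I'm frustrated"))
```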

[Chart: Sentiment Distribution in Customer Support Conversations]

Entity Extraction

Entity extraction identifies specific elements within conversations—product names, dates, locations, problem types, and more. This capability allows for precise categorization and filtering of conversation data. When analyzing customer support conversations, entity extraction can automatically tag product mentions, enabling product-specific analysis without manual coding.
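A simple way to see how product tagging enables product-specific analysis is a gazetteer (lookup-list) extractor combined with a couple of regular expressions. The product catalog, the ISO-only date pattern, and the order-number format below are hypothetical; statistical named entity recognition generalizes far better to unseen names and formats.

```python
import re
from collections import Counter

# Hypothetical product catalog used as a lookup list (gazetteer).
PRODUCTS = ["Model X Router", "CloudSync", "Pro Plan"]
CANONICAL = {p.lower(): p for p in PRODUCTS}
PRODUCT_RE = re.compile(
    r"\b(?:" + "|".join(re.escape(p) for p in sorted(PRODUCTS, key=len, reverse=True)) + r")\b",
    re.IGNORECASE,
)
DATE_RE = re.compile(r"\b\d{4}-\d{2}-\d{2}\b")                 # ISO dates only, for brevity
ORDER_RE = re.compile(r"\border\s+#?(\d{5,})\b", re.IGNORECASE)  # hypothetical order-ID format

def extract_entities(text: str) -> list[dict]:
    """Return PRODUCT, DATE, and ORDER_ID entities sorted by position."""
    entities = [{"type": "PRODUCT", "text": CANONICAL[m.group(0).lower()], "start": m.start()}
                for m in PRODUCT_RE.finditer(text)]
    entities += [{"type": "DATE", "text": m.group(0), "start": m.start()}
                 for m in DATE_RE.finditer(text)]
    entities += [{"type": "ORDER_ID", "text": m.group(1), "start": m.start(1)}
                 for m in ORDER_RE.finditer(text)]
    return sorted(entities, key=lambda e: e["start"])

def product_mentions(messages: list[str]) -> Counter:
    """Count product mentions across messages for product-specific analysis."""
    return Counter(e["text"] for msg in messages
                   for e in extract_entities(msg) if e["type"] == "PRODUCT")

print(extract_entities("My cloudsync stopped syncing on 2024-03-05, see order #123456."))
```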

Topic Modeling

I've leveraged topic modeling to automatically discover themes within large conversation datasets. Rather than predefined categories, these algorithms identify natural clusters of related terms that represent distinct topics. This approach has helped me uncover unexpected patterns and themes that might have been missed with manual categorization.
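One common topic-modeling technique is Latent Dirichlet Allocation (LDA). The sketch below fits LDA with collapsed Gibbs sampling in plain Python so the mechanics are visible; the hyperparameters (alpha, beta, iteration count) are illustrative defaults, not tuned values, and a real project would more likely use an established library.

```python
import random
from collections import defaultdict

def lda_gibbs(docs, num_topics, iterations=200, alpha=0.1, beta=0.01, seed=0):
    """Fit LDA with collapsed Gibbs sampling.

    docs: list of token lists (one per conversation).
    Returns (topic_word, doc_topic) count tables.
    """
    rng = random.Random(seed)
    vocab_size = len({w for doc in docs for w in doc})
    doc_topic = [[0] * num_topics for _ in docs]
    topic_word = [defaultdict(int) for _ in range(num_topics)]
    topic_total = [0] * num_topics
    assignments = []

    def update(d, w, k, delta):
        doc_topic[d][k] += delta
        topic_word[k][w] += delta
        topic_total[k] += delta

    # Random initial topic for every token
    for d, doc in enumerate(docs):
        z = [rng.randrange(num_topics) for _ in doc]
        for w, k in zip(doc, z):
            update(d, w, k, +1)
        assignments.append(z)

    topics = range(num_topics)
    for _ in range(iterations):
        for d, doc in enumerate(docs):
            for i, w in enumerate(doc):
                update(d, w, assignments[d][i], -1)  # exclude the token being resampled
                # Full conditional for this token's topic, up to a normalizing constant
                weights = [(doc_topic[d][t] + alpha) * (topic_word[t][w] + beta)
                           / (topic_total[t] + vocab_size * beta) for t in topics]
                k = rng.choices(topics, weights=weights)[0]
                assignments[d][i] = k
                update(d, w, k, +1)
    return topic_word, doc_topic

def top_words(topic_word, n=3):
    """Most frequent words per topic (ties broken alphabetically)."""
    return [[w for w in sorted(tw, key=lambda w: (-tw[w], w)) if tw[w] > 0][:n]
            for tw in topic_word]

docs = [["password", "reset", "login", "password"], ["invoice", "refund", "charge", "invoice"],
        ["login", "password", "locked"], ["refund", "charge", "invoice"]]
topic_word, doc_topic = lda_gibbs(docs, num_topics=2, iterations=50)
print(top_words(topic_word))
```

On a corpus this small the discovered topics can vary with the seed; the value of LDA shows up on thousands of conversations.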

Summarization Technologies

Extractive and abstractive summarization technologies have transformed how we distill lengthy conversations. Extractive methods identify and pull out key sentences, while abstractive approaches generate new, concise text that captures the essence of the conversation. These technologies are particularly valuable when turning conversations into presentations, condensing hours of discussion into clear, actionable insights.
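The extractive approach can be sketched in a few lines: score each sentence by how frequent its content words are across the whole conversation, keep the top scorers, and return them in their original order. The stopword list is a hypothetical minimal one, and frequency scoring is only one of several extractive strategies; abstractive summarization requires a generative model and is not shown here.

```python
import re
from collections import Counter

STOPWORDS = {"a", "an", "and", "be", "can", "for", "i", "in", "is", "it", "my",
             "of", "on", "or", "that", "the", "this", "to", "was", "we", "with", "you", "your"}

def content_words(text):
    return [w for w in re.findall(r"[a-z']+", text.lower()) if w not in STOPWORDS]

def summarize(turns, max_sentences=2):
    """Frequency-based extractive summary: keep the top-scoring sentences in original order."""
    sentences = [s.strip() for turn in turns
                 for s in re.split(r"(?<=[.!?])\s+", turn) if s.strip()]
    freq = Counter(w for s in sentences for w in content_words(s))

    def score(sentence):
        words = content_words(sentence)
        return sum(freq[w] for w in words) / len(words) if words else 0.0

    ranked = sorted(range(len(sentences)), key=lambda i: score(sentences[i]), reverse=True)
    return [sentences[i] for i in sorted(ranked[:max_sentences])]

turns = ["Hi there.",
         "My router keeps dropping the connection. The router light blinks red.",
         "Try resetting the router. That usually fixes the connection.",
         "Thanks!"]
print(summarize(turns))  # ['My router keeps dropping the connection.', 'Try resetting the router.']
```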

Practical Applications Across Industries

In my experience, conversational data analysis powered by NLP has found meaningful applications across diverse industries. Each sector leverages these technologies in unique ways to address specific challenges.

Customer Service

Automated analysis of support conversations to identify common issues, measure agent performance, and detect opportunities for self-service options.

Healthcare

Analysis of patient-doctor conversations to improve care quality, ensure comprehensive symptom discussion, and identify potential diagnosis oversights.

Education

Evaluation of classroom discussions to measure student engagement, identify knowledge gaps, and assess teaching effectiveness across different topics.

Business Intelligence

Transformation of meeting transcripts into strategic insights, action items, and decision records without manual note-taking and synthesis.

Customer Conversation Intelligence

I've found customer conversation intelligence to be one of the most impactful applications of NLP. By analyzing support interactions, sales calls, and feedback channels, organizations can unlock insights that drive meaningful improvements.

Customer Journey Visualization from Conversation Data

How conversation analysis maps to customer experience stages:

```mermaid
flowchart LR
    A[Awareness] -->|Product Questions| B[Consideration]
    B -->|Technical Inquiries| C[Purchase]
    C -->|Setup Issues| D[Onboarding]
    D -->|Usage Questions| E[Adoption]
    E -->|Feature Requests| F[Expansion]
    subgraph "Conversation Types"
        G[Pre-Sales Chat]
        H[Support Tickets]
        I[Training Calls]
        J[Feedback Surveys]
    end
    G -.-> A & B
    H -.-> C & D & E
    I -.-> D & E
    J -.-> E & F
    style A fill:#FF8000,stroke:#FF8000,color:white
    style F fill:#66BB6A,stroke:#66BB6A,color:white
```

Using advanced NLP, I can convert customer support transcripts into visual journey maps that highlight common paths, friction points, and moments of delight. These visualizations make it easy to identify where customers struggle and where they find value.
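Once conversations have been tagged with a journey stage and a sentiment label, friction points can be surfaced by ranking stages by their share of negative conversations. This is a minimal sketch under that assumption; the tagging itself, the field names, and the minimum-count cutoff are hypothetical.

```python
from collections import defaultdict

def friction_by_stage(conversations, min_conversations=2):
    """Share of negative conversations per journey stage, highest first.

    conversations: dicts with a 'stage' and a 'sentiment' label, assumed to come
    from upstream tagging. Stages with too few conversations are skipped.
    """
    totals, negatives = defaultdict(int), defaultdict(int)
    for conv in conversations:
        totals[conv["stage"]] += 1
        negatives[conv["stage"]] += conv["sentiment"] == "negative"
    rates = {s: negatives[s] / totals[s] for s in totals if totals[s] >= min_conversations}
    return sorted(rates.items(), key=lambda kv: kv[1], reverse=True)

convs = [
    {"stage": "Onboarding", "sentiment": "negative"},
    {"stage": "Onboarding", "sentiment": "negative"},
    {"stage": "Onboarding", "sentiment": "positive"},
    {"stage": "Adoption", "sentiment": "positive"},
    {"stage": "Adoption", "sentiment": "neutral"},
    {"stage": "Purchase", "sentiment": "negative"},
]
print(friction_by_stage(convs))
```

The cutoff keeps a single angry conversation from making a rarely visited stage look like the biggest problem.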

[Chart: Common Issues in Customer Support Conversations]

One particularly valuable application is using AI tools to analyze comments and transform qualitative feedback into quantitative metrics. This approach allows organizations to track changes in customer sentiment, issue prevalence, and resolution effectiveness over time, creating a data-driven approach to customer experience improvement.
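Turning tagged conversations into trend metrics is mostly an aggregation step. The sketch below rolls records up by ISO week into conversation volume, average sentiment, resolution rate, and issue share. The record fields are hypothetical and assumed to be produced by upstream NLP tagging.

```python
from collections import Counter, defaultdict
from datetime import date

def weekly_metrics(records):
    """Roll tagged conversations up into ISO-week metrics for trend charts.

    records: dicts with 'day' (datetime.date), 'issue' (str),
    'sentiment' (float in [-1, 1]) and 'resolved' (bool).
    """
    buckets = defaultdict(list)
    for rec in records:
        year, week, _ = rec["day"].isocalendar()
        buckets[(year, week)].append(rec)
    metrics = {}
    for key in sorted(buckets):
        rows = buckets[key]
        n = len(rows)
        issues = Counter(r["issue"] for r in rows)
        metrics[key] = {
            "conversations": n,
            "avg_sentiment": sum(r["sentiment"] for r in rows) / n,
            "resolution_rate": sum(r["resolved"] for r in rows) / n,
            "issue_share": {issue: c / n for issue, c in issues.items()},
        }
    return metrics

sample = [
    {"day": date(2024, 1, 1), "issue": "login", "sentiment": -0.5, "resolved": True},
    {"day": date(2024, 1, 3), "issue": "billing", "sentiment": 0.5, "resolved": False},
    {"day": date(2024, 1, 8), "issue": "login", "sentiment": 0.0, "resolved": True},
]
for week, m in weekly_metrics(sample).items():
    print(week, m["conversations"], m["resolution_rate"], m["issue_share"])
```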

From Raw Conversations to Visual Narratives

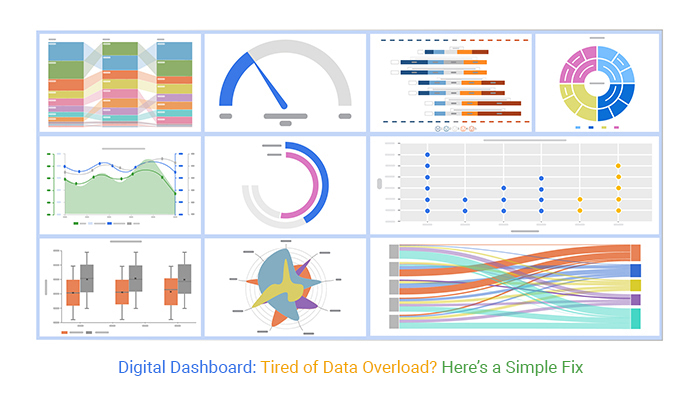

In my work with conversational data, I've found that visualization is the key to making complex insights accessible and actionable. Raw conversation data can be overwhelming, but effective visualization techniques transform this complexity into clear, compelling visual narratives.

Conversation Flow Visualization

Visualizing the structure and flow of conversations helps identify patterns in how discussions evolve. I often use node-link diagrams where each node represents a message or topic, and links show the conversation flow. These visualizations reveal common paths, diversions, and loops in conversation patterns.
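A node-link view of conversation flow can be built by counting transitions between consecutive steps across many conversations. This sketch assumes each conversation has already been reduced to a sequence of step labels (a hypothetical upstream step), and emits Mermaid flowchart text with the transition counts as edge labels.

```python
from collections import Counter

def transition_counts(paths):
    """Count how often each step is followed by each other step."""
    counts = Counter()
    for path in paths:
        for src, dst in zip(path, path[1:]):
            counts[(src, dst)] += 1
    return counts

def to_mermaid(counts):
    """Render transition counts as a Mermaid flowchart, most frequent edges first."""
    ids = {}

    def node(name):
        if name not in ids:
            ids[name] = f"n{len(ids)}"
        return f'{ids[name]}["{name}"]'

    lines = ["flowchart TD"]
    for (src, dst), n in sorted(counts.items(), key=lambda kv: (-kv[1], kv[0])):
        lines.append(f"    {node(src)} -->|{n}| {node(dst)}")
    return "\n".join(lines)

paths = [
    ["Initial Query", "Troubleshooting", "Problem Solved"],
    ["Initial Query", "Troubleshooting", "Escalation"],
    ["Initial Query", "Account Verification"],
]
print(to_mermaid(transition_counts(paths)))
```

Loops such as repeated verification attempts show up naturally as edges pointing back to an earlier node.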

Conversation Flow Patterns

Common structures in customer support conversations:

```mermaid
flowchart TD
    A[Initial Query] --> B{Issue Type}
    B -->|Technical| C[Troubleshooting]
    B -->|Billing| D[Account Verification]
    B -->|Feature| E[Feature Explanation]
    C --> F{Resolution?}
    D --> G{Verification Success?}
    E --> H{Understanding Achieved?}
    F -->|Yes| I[Problem Solved]
    F -->|No| J[Escalation]
    G -->|Yes| K[Billing Action]
    G -->|No| L[Identity Verification]
    H -->|Yes| M[Feature Adoption]
    H -->|No| N[Alternative Approach]
    J --> O[Specialist Input]
    L --> G
    N --> P[Use Case Examples]
    style A fill:#FF8000,stroke:#FF8000,color:white
    style I fill:#66BB6A,stroke:#66BB6A,color:white
    style K fill:#66BB6A,stroke:#66BB6A,color:white
    style M fill:#66BB6A,stroke:#66BB6A,color:white
```

Sentiment Timeline Visualization

Tracking sentiment throughout a conversation reveals emotional arcs and turning points. I've found that line charts or heatmaps that display sentiment over time highlight critical moments where customer emotions shift—both positively and negatively.
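Per-turn sentiment scores are noisy, so a sentiment timeline is usually smoothed before plotting. This sketch applies a trailing moving average and marks the turns where the smoothed curve crosses zero; the window size is an arbitrary assumption, and the per-turn scores are assumed to come from a sentiment model.

```python
def rolling_mean(values, window=3):
    """Trailing moving average; early points average over the turns seen so far."""
    out = []
    for i in range(len(values)):
        chunk = values[max(0, i - window + 1): i + 1]
        out.append(sum(chunk) / len(chunk))
    return out

def zero_crossings(smoothed):
    """Indices where smoothed sentiment moves between negative and non-negative."""
    return [i for i in range(1, len(smoothed))
            if (smoothed[i - 1] < 0) != (smoothed[i] < 0)]

per_turn = [-1.0, -1.0, 1.0, 1.0]
smoothed = rolling_mean(per_turn, window=2)
print(smoothed, zero_crossings(smoothed))  # [-1.0, -1.0, 0.0, 1.0] [2]
```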

[Chart: Sentiment Evolution During Support Conversations]

Topic Distribution Visualization

When analyzing large conversation datasets, visualizing topic distribution helps identify dominant themes and their relationships. I often use treemaps, sunburst diagrams, or packed bubble charts to show hierarchical topic structures and their relative prevalence.
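Treemaps and sunburst charts generally expect the hierarchy as flat parallel arrays of node ids, labels, parent ids, and values. This sketch builds those arrays from per-conversation topic paths (assumed to come from a topic classifier). Parent values include their children, which matches Plotly's `branchvalues="total"` mode for its treemap and sunburst traces.

```python
from collections import Counter

def hierarchy_arrays(topic_paths):
    """Flatten per-conversation topic paths into parallel arrays for a treemap/sunburst.

    topic_paths: one tuple per conversation, e.g. ("Billing", "Refunds").
    Assumes topic names do not contain '/', which is used to build ids.
    """
    counts = Counter()
    for path in topic_paths:
        for depth in range(1, len(path) + 1):
            counts[tuple(path[:depth])] += 1
    ids, labels, parents, values = [], [], [], []
    for node in sorted(counts):
        ids.append("/".join(node))
        labels.append(node[-1])
        parents.append("/".join(node[:-1]))   # "" marks a top-level topic
        values.append(counts[node])
    return ids, labels, parents, values

paths = [("Billing", "Refunds"), ("Billing", "Refunds"),
         ("Billing", "Invoices"), ("Technical", "Login")]
print(hierarchy_arrays(paths))
```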

PageOn.ai's AI Blocks feature has been particularly valuable in this context. By transforming conversation transcripts into structured visual hierarchies, AI Blocks makes it easy to see how different topics relate to each other and where the conversation emphasis lies. This structured approach brings clarity to even the most complex conversation datasets.

Key Moment Highlighting

Not all moments in a conversation are equally important. I've developed techniques to identify and visually highlight critical turning points—moments where sentiment shifts dramatically, new topics emerge, or resolution pathways become clear. These visual cues draw attention to the most significant parts of the conversation, making analysis more efficient.
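Two of the cues mentioned above, sharp sentiment shifts and newly emerging topics, can be flagged with a simple pass over the turns. This is a minimal sketch assuming each turn already carries a sentiment score and a topic label; the 0.8 shift threshold is an arbitrary, hypothetical setting.

```python
def key_moments(turns, shift_threshold=0.8):
    """Flag turns with large sentiment jumps or the first mention of a topic.

    turns: dicts with 'sentiment' (float) and 'topic' (str).
    Returns (turn index, reasons) pairs.
    """
    moments, seen_topics = [], set()
    for i, turn in enumerate(turns):
        reasons = []
        if i > 0 and abs(turn["sentiment"] - turns[i - 1]["sentiment"]) >= shift_threshold:
            reasons.append("sentiment_shift")
        if turn["topic"] not in seen_topics:
            if i > 0:                     # the opening topic is not a "new" topic
                reasons.append("new_topic")
            seen_topics.add(turn["topic"])
        if reasons:
            moments.append((i, reasons))
    return moments

turns = [{"sentiment": -0.6, "topic": "login"}, {"sentiment": -0.7, "topic": "login"},
         {"sentiment": 0.4, "topic": "login"}, {"sentiment": 0.5, "topic": "billing"}]
print(key_moments(turns))  # [(2, ['sentiment_shift']), (3, ['new_topic'])]
```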

For presentations that communicate these insights, I've found that the principles of good speechwriting can be applied to create compelling narratives from conversation data. By structuring the insights with clear themes, supporting evidence, and emotional resonance, the resulting presentations become much more impactful.

The Technical Architecture of Conversational NLP Systems

Building effective conversational NLP systems requires a thoughtfully designed technical architecture. In my experience developing these systems, I've found that several key components must work together seamlessly.

Conversational NLP System Architecture

The technical components that power conversation analysis:

```mermaid
flowchart TD
    A[Data Collection Layer] --> B[Preprocessing Pipeline]
    B --> C[NLP Processing Engine]
    C --> D[Analysis Layer]
    D --> E[Visualization Engine]
    subgraph "Data Collection"
        A1[Chat Logs]
        A2[Call Transcripts]
        A3[Email Conversations]
        A4[Social Media]
    end
    subgraph "Preprocessing"
        B1[Tokenization]
        B2[Normalization]
        B3[Noise Removal]
        B4[Sentence Segmentation]
    end
    subgraph "NLP Engine"
        C1[Transformer Models]
        C2[Word Embeddings]
        C3[Named Entity Recognition]
        C4[Sentiment Analysis]
    end
    subgraph "Analysis"
        D1[Pattern Detection]
        D2[Anomaly Detection]
        D3[Trend Analysis]
        D4[Insight Generation]
    end
    subgraph "Visualization"
        E1[Interactive Dashboards]
        E2[Flow Diagrams]
        E3[Sentiment Maps]
        E4[Topic Visualizations]
    end
    A1 & A2 & A3 & A4 --> A
    B --> B1 & B2 & B3 & B4
    C --> C1 & C2 & C3 & C4
    D --> D1 & D2 & D3 & D4
    E --> E1 & E2 & E3 & E4
    style A fill:#FF8000,stroke:#FF8000,color:white
    style E fill:#66BB6A,stroke:#66BB6A,color:white
```

Essential NLP Preprocessing

Effective conversation analysis begins with preprocessing—the often overlooked but critical foundation. In my systems, I implement several key preprocessing steps:

- Tokenization: Breaking text into words, phrases, or other meaningful elements

- Normalization: Converting text to consistent case, handling contractions, and standardizing formats

- Noise removal: Filtering out irrelevant elements like timestamps, system messages, or automated notifications

- Speaker diarization: Identifying and separating different speakers in conversation transcripts

- Context windowing: Creating appropriate context windows to maintain conversation coherence during analysis
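The preprocessing steps above can be sketched as one function over a chat transcript. This assumes a hypothetical line format of `[HH:MM] Speaker: text`, treats `SYSTEM` and `BOT` lines as noise, and uses a tiny contraction table. "Speaker separation" here only means parsing speaker labels that are already present; true diarization from audio is out of scope.

```python
import re

TIMESTAMP_RE = re.compile(r"^\[\d{1,2}:\d{2}\]\s*")              # hypothetical "[HH:MM]" prefix
LINE_RE = re.compile(r"^(?P<speaker>[A-Za-z]+):\s*(?P<text>.*)$")
SYSTEM_SPEAKERS = {"SYSTEM", "BOT"}
CONTRACTIONS = {"can't": "cannot", "won't": "will not", "don't": "do not",
                "i'm": "i am", "it's": "it is"}

def preprocess(transcript: str, window: int = 2) -> list[dict]:
    turns = []
    for raw in transcript.splitlines():
        line = TIMESTAMP_RE.sub("", raw.strip())           # noise removal: timestamps
        match = LINE_RE.match(line)
        if not match or match["speaker"].upper() in SYSTEM_SPEAKERS:
            continue                                        # noise removal: blank/system lines
        text = match["text"].lower()                        # normalization: case
        tokens = []
        for tok in re.findall(r"[a-z0-9']+", text):          # tokenization
            tokens.extend(CONTRACTIONS.get(tok, tok).split())  # normalization: contractions
        turns.append({"speaker": match["speaker"].capitalize(), "tokens": tokens})
    # Context windowing: attach the tokens of up to `window` preceding turns
    for i, turn in enumerate(turns):
        turn["context"] = [t["tokens"] for t in turns[max(0, i - window):i]]
    return turns

transcript = "[10:02] Customer: I can't log in!\n[10:03] SYSTEM: Agent joined\n[10:03] Agent: Let's reset it.\n"
for turn in preprocess(transcript):
    print(turn)
```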

Deep Learning Models for Conversation

The heart of modern conversation analysis lies in specialized deep learning models. I've found that different conversation types often require tailored approaches:

| Model Type | Best For | Limitations | Example Use Case |
|---|---|---|---|
| BERT-based Models | Understanding context in shorter conversations | Limited context window (512 tokens) | Customer support chat analysis |
| Longformer | Extended conversations with long-range dependencies | Computationally intensive | Meeting transcript analysis |
| DialoGPT | Analyzing conversational flow and coherence | May miss subtle context cues | Sales conversation analysis |
| BART | Summarization of conversation content | May lose specific details | Executive meeting summaries |
| T5 | Multi-task conversation analysis | Requires significant fine-tuning | Integrated conversation intelligence platform |

The Role of Transformer Architectures

Transformer architectures have revolutionized conversation analysis by capturing long-range dependencies and contextual relationships. Their self-attention mechanisms excel at understanding how different parts of a conversation relate to each other, even when separated by many turns.
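The core of that self-attention mechanism is scaled dot-product attention: each position scores every other position, the scores go through a softmax, and the output is a weighted average. This plain-Python sketch treats each conversation turn as a small vector and, as a simplification, uses the raw vectors as queries, keys, and values (a single head, no learned projection matrices, unlike a real transformer).

```python
import math

def softmax(scores):
    peak = max(scores)                      # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(turn_vectors):
    """Scaled dot-product self-attention over per-turn vectors.

    Returns (outputs, attention weights); weights[i][j] is how much turn i attends to turn j.
    """
    d = len(turn_vectors[0])
    outputs, weights = [], []
    for query in turn_vectors:
        scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in turn_vectors]
        w = softmax(scores)
        weights.append(w)
        # Each output is the attention-weighted average of all turn vectors
        outputs.append([sum(w[j] * turn_vectors[j][c] for j in range(len(turn_vectors)))
                        for c in range(d)])
    return outputs, weights

# Turns 0 and 2 are similar, so turn 0 attends to turn 2 more than to turn 1,
# regardless of how many turns separate them.
outputs, weights = self_attention([[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]])
print([round(w, 3) for w in weights[0]])
```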

In my implementations, I've found that transformer models pre-trained on conversational data perform significantly better than general language models. Fine-tuning these models on domain-specific conversations (such as customer support for a particular product) yields even better results.

Integration with Visualization Frameworks

One of the most challenging aspects of building conversational NLP systems is bridging the gap between analysis results and visualization frameworks. I've found that designing intermediate data structures that capture the essential patterns while abstracting away unnecessary complexity is key to effective integration.
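One way to picture such an intermediate structure is a small typed schema that NLP stages write into and visualization code reads from. The class and field names below are hypothetical; the point is that each chart pulls a simple shape (such as a sentiment series) from one stable record instead of from raw model outputs.

```python
import json
from dataclasses import asdict, dataclass, field

@dataclass
class TurnInsight:
    index: int
    speaker: str
    sentiment: float
    topic: str
    entities: list[str] = field(default_factory=list)

@dataclass
class ConversationInsight:
    conversation_id: str
    channel: str
    intent: str
    turns: list[TurnInsight] = field(default_factory=list)

    def sentiment_series(self) -> list[tuple[int, float]]:
        """(turn index, sentiment) pairs, the shape a line chart needs."""
        return [(t.index, t.sentiment) for t in self.turns]

    def to_json(self) -> str:
        """Serialize for a dashboard or charting front end."""
        return json.dumps(asdict(self))

insight = ConversationInsight("c1", "chat", "password_help", [
    TurnInsight(0, "Customer", -0.6, "login", ["CloudSync"]),
    TurnInsight(1, "Agent", 0.2, "login"),
])
print(insight.sentiment_series())
print(insight.to_json())
```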

PageOn.ai's Deep Search capability has been particularly valuable here, automatically finding relevant visual assets to represent conversation themes. This feature dramatically reduces the time needed to transform NLP insights into compelling visual narratives.

Ethical Considerations in Conversational Analysis

As I've developed and deployed conversational analysis systems, I've become increasingly mindful of the ethical considerations they raise. Conversations often contain sensitive information, personal details, and private expressions that require careful handling.

Privacy Concerns

Conversations often contain personally identifiable information (PII), health information, financial details, and other sensitive data. I implement several privacy-preserving approaches in my systems:

- Automated PII detection and redaction before analysis

- Aggregation techniques that preserve insights while removing individual identifiers

- Strict access controls for raw conversation data versus anonymized insights

- Data minimization principles that limit collection to what's necessary for analysis

- Retention policies that ensure data isn't kept longer than needed
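Two of these practices, PII redaction before analysis and aggregation that hides individuals, can be sketched with the standard library. The regular expressions are illustrative assumptions: they catch well-formatted emails, 13 to 16 digit card numbers, and North American phone numbers, and will miss names, addresses, and unusual formats, which is why production redaction usually adds a trained NER model. The small-group cutoff `k` is likewise a hypothetical setting.

```python
import re
from collections import Counter

# Illustrative patterns only; order matters (cards are redacted before phones).
PII_PATTERNS = [
    ("EMAIL", re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")),
    ("CARD", re.compile(r"\b\d(?:[ -]?\d){12,15}\b")),
    ("PHONE", re.compile(r"(?:\+\d{1,2}\s?)?\(?\d{3}\)?[\s.-]?\d{3}[\s.-]?\d{4}\b")),
]

def redact(text: str) -> str:
    """Replace each PII match with a typed placeholder before analysis."""
    for label, pattern in PII_PATTERNS:
        text = pattern.sub(f"[{label}]", text)
    return text

def suppress_small_groups(labels, k=5):
    """Aggregate counts per group and drop groups with fewer than k members."""
    counts = Counter(labels)
    return {group: n for group, n in counts.items() if n >= k}

print(redact("Email jane.doe@example.com or call 555-123-4567 about card 4111 1111 1111 1111."))
```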

Bias Detection and Mitigation

NLP models can inherit and amplify biases present in their training data. When analyzing conversations, these biases can lead to unfair or inaccurate conclusions about certain groups or topics. I take several steps to address this challenge:

Bias Mitigation Framework

A systematic approach to addressing bias in conversation analysis:

```mermaid
flowchart TD
    A[Data Collection] --> B[Bias Audit]
    B --> C{Bias Detected?}
    C -->|Yes| D[Data Balancing]
    C -->|No| E[Model Training]
    D --> E
    E --> F[Model Evaluation]
    F --> G{Performance Disparities?}
    G -->|Yes| H[Model Adjustment]
    G -->|No| I[Deployment]
    H --> F
    I --> J[Ongoing Monitoring]
    J --> K{New Bias Patterns?}
    K -->|Yes| L[Model Update]
    K -->|No| J
    L --> J
    style A fill:#FF8000,stroke:#FF8000,color:white
    style J fill:#66BB6A,stroke:#66BB6A,color:white
```
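The "Performance Disparities?" check in this framework can be as simple as comparing a metric across groups on a labeled evaluation set. This sketch uses accuracy and a hypothetical 5-point maximum gap; which groups to compare (language variety, region, channel) and which metric matters are project decisions, and accuracy alone can hide disparities that per-class metrics would reveal.

```python
from collections import defaultdict

def accuracy_by_group(records):
    """records: (group, predicted_label, true_label) triples."""
    correct, total = defaultdict(int), defaultdict(int)
    for group, predicted, actual in records:
        total[group] += 1
        correct[group] += int(predicted == actual)
    return {g: correct[g] / total[g] for g in total}

def disparity_check(records, max_gap=0.05):
    """Flag the model when best- and worst-served groups differ by more than max_gap."""
    acc = accuracy_by_group(records)
    gap = max(acc.values()) - min(acc.values())
    return {"accuracy": acc, "gap": gap, "flagged": gap > max_gap}

eval_set = [("en-US", "pos", "pos"), ("en-US", "neg", "neg"),
            ("en-IN", "pos", "neg"), ("en-IN", "neg", "neg")]
print(disparity_check(eval_set))
```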

Transparency in Analysis

I believe in maintaining transparency about how conversation data is analyzed and transformed into insights. This means clearly communicating:

- What data is being collected and analyzed

- How NLP models are interpreting the conversations

- The limitations and confidence levels of the analysis

- How insights derived from conversations will be used

Human Oversight

While automation enables scaling conversation analysis, I always incorporate human oversight into the process. This ensures that:

- Edge cases are properly handled

- Context-dependent interpretations are verified

- Potentially harmful or inappropriate conclusions are caught

- The system continues to align with organizational values and objectives
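A common way to put humans in the loop is confidence-based routing: predictions the model is unsure about, or that touch sensitive categories, go to a review queue instead of being acted on automatically. The 0.75 threshold and the sensitive-label set below are hypothetical placeholders.

```python
def route(predictions, threshold=0.75,
          sensitive_labels=frozenset({"self_harm", "legal_threat"})):
    """Split predictions into auto-accepted and human-review lists."""
    auto, review = [], []
    for p in predictions:
        if p["confidence"] < threshold or p["label"] in sensitive_labels:
            review.append(p)   # uncertain or high-stakes: a person checks it
        else:
            auto.append(p)
    return auto, review

preds = [{"id": 1, "label": "billing", "confidence": 0.92},
         {"id": 2, "label": "billing", "confidence": 0.55},
         {"id": 3, "label": "legal_threat", "confidence": 0.97}]
auto, review = route(preds)
print([p["id"] for p in auto], [p["id"] for p in review])  # [1] [2, 3]
```

Reviewer decisions can then be fed back as labeled data, which also supports the ongoing monitoring step in the bias framework.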

Regulatory Compliance

Conversation analysis must comply with relevant regulations such as GDPR, HIPAA, CCPA, and industry-specific requirements. My approach includes regular compliance audits, documentation of data handling practices, and built-in features that support compliance requirements like the right to access, right to be forgotten, and data portability.

Building an Effective Conversation Analysis Workflow

Based on my experience implementing conversation analysis systems across various organizations, I've developed a structured workflow that consistently delivers valuable insights. This approach balances technical sophistication with practical usability.

Conversation Analysis Workflow

A systematic process from data collection to actionable insights:

```mermaid
flowchart LR
    A[Data Collection] --> B[Data Preparation]
    B --> C[Model Selection]
    C --> D[Analysis Execution]
    D --> E[Insight Generation]
    E --> F[Visualization]
    F --> G[Decision Making]
    G --> H[Implementation]
    H --> I[Feedback Loop]
    I --> A
    style A fill:#FF8000,stroke:#FF8000,color:white
    style I fill:#66BB6A,stroke:#66BB6A,color:white
```

Data Collection and Preparation

The foundation of effective conversation analysis is proper data collection and preparation. I recommend:

- Establishing consistent collection methods across all conversation channels

- Implementing proper metadata tagging (timestamps, participants, channels, etc.)

- Creating standardized preprocessing pipelines that handle different conversation formats

- Developing quality control measures to identify and address data issues

- Building privacy-preserving mechanisms into the collection process
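Metadata tagging and quality control can meet in a validation step that runs before any NLP processing. This sketch checks a conversation record for required metadata, a known channel, a parseable ISO 8601 start time, and non-empty turns. The field names and channel list are hypothetical, and note that `datetime.fromisoformat` only accepts a trailing `Z` from Python 3.11 onward.

```python
from datetime import datetime

REQUIRED_FIELDS = ("conversation_id", "channel", "started_at", "turns")
KNOWN_CHANNELS = {"chat", "email", "phone", "social"}  # hypothetical channel list

def validate_record(record: dict) -> list[str]:
    """Return a list of data-quality problems (empty if the record looks usable)."""
    problems = [f"missing {key}" for key in REQUIRED_FIELDS if key not in record]
    if "channel" in record and record["channel"] not in KNOWN_CHANNELS:
        problems.append(f"unknown channel {record['channel']!r}")
    if "started_at" in record:
        try:
            datetime.fromisoformat(record["started_at"])
        except (TypeError, ValueError):
            problems.append("started_at is not an ISO 8601 timestamp")
    if "turns" in record:
        if not record["turns"]:
            problems.append("no turns")
        elif any(not turn.get("text", "").strip() for turn in record["turns"]):
            problems.append("empty turn text")
    return problems

good = {"conversation_id": "c1", "channel": "chat", "started_at": "2024-03-05T10:02:00",
        "turns": [{"speaker": "Customer", "text": "Hi"}]}
bad = {"conversation_id": "c2", "channel": "fax", "started_at": "yesterday",
       "turns": [{"text": " "}]}
print(validate_record(good), validate_record(bad))
```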

Selecting Appropriate NLP Models

Different conversation types require different analytical approaches. When selecting models, I consider:

[Chart: Model Selection Factors by Conversation Type]
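As a rough illustration, the trade-offs in the model comparison table above can be encoded as a first-pass rule of thumb. Everything here is an assumption: the task names are hypothetical, and the token estimate (about 1.3 subword tokens per English word) is only a heuristic, so a real pipeline should count tokens with the model's own tokenizer. The 510 limit reflects BERT's 512 positions minus the `[CLS]` and `[SEP]` tokens.

```python
import math

BERT_CONTENT_LIMIT = 510  # 512 positions minus [CLS] and [SEP]

def estimate_tokens(text: str) -> int:
    """Heuristic: roughly 1.3 subword tokens per whitespace-separated word."""
    return math.ceil(len(text.split()) * 1.3)

def suggest_model_family(text: str, task: str) -> str:
    """Hypothetical first-pass mapping from task and length to a model family."""
    if task == "summarization":
        return "BART"
    if task == "multi_task":
        return "T5"
    if task == "dialogue_flow":
        return "DialoGPT"
    return "BERT-based" if estimate_tokens(text) <= BERT_CONTENT_LIMIT else "Longformer"

print(suggest_model_family("My router keeps dropping the connection", "classification"))
print(suggest_model_family("word " * 1000, "classification"))
```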

Integrating Analysis Results

For analysis results to drive action, they must be effectively integrated into decision-making processes. I recommend:

- Creating role-specific dashboards that highlight relevant insights for different stakeholders

- Establishing regular insight review sessions with cross-functional teams

- Developing clear protocols for escalating critical insights that require immediate action

- Building integration points with existing business intelligence and CRM systems

- Training teams on how to interpret and act on conversation insights

Creating Actionable Reports

The ultimate goal of conversation analysis is to drive action. I design reporting structures that:

- Highlight key findings clearly and concisely

- Provide appropriate context for interpreting results

- Include specific, actionable recommendations

- Incorporate trend analysis to show changes over time

- Allow drill-down into supporting evidence when needed

PageOn.ai's Vibe Creation feature has been transformative in this area. It helps transform technical NLP outputs into accessible visual stories that resonate with diverse stakeholders. By applying consistent visual themes and narrative structures, Vibe Creation ensures that insights are not just understood but also memorable and compelling.

Future Directions in Conversational Data Analysis

As I look to the horizon of conversational data analysis, I see several exciting developments that will transform how we extract and visualize insights from human conversations.

Multimodal Analysis

The future of conversation analysis will move beyond text to incorporate multiple communication channels simultaneously:

- Voice analysis: Detecting emotional states through tone, pace, volume, and vocal characteristics

- Visual cues: Analyzing facial expressions, gestures, and body language in video conversations

- Interaction patterns: Measuring response times, interruptions, and turn-taking behaviors

- Integrated understanding: Combining these signals for a more complete understanding of communication
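Some interaction-pattern signals do not need audio or video at all; timestamped turns are enough. This sketch computes speaker switches, interruptions (a turn that starts before the other speaker's turn has ended), and the mean response gap. It assumes turns carry `start` and `end` datetimes from a transcription or chat system, which is a hypothetical input format.

```python
from datetime import datetime

def interaction_metrics(turns):
    """Speaker switches, interruptions (overlaps) and mean response gap.

    turns: dicts with 'speaker', 'start' and 'end' datetimes, sorted by start.
    """
    gaps, interruptions, switches = [], 0, 0
    for prev, cur in zip(turns, turns[1:]):
        if cur["speaker"] == prev["speaker"]:
            continue                      # same speaker continuing: not a turn change
        switches += 1
        gap = (cur["start"] - prev["end"]).total_seconds()
        if gap < 0:
            interruptions += 1            # started before the other speaker finished
        else:
            gaps.append(gap)
    mean_gap = sum(gaps) / len(gaps) if gaps else None
    return {"speaker_switches": switches, "interruptions": interruptions,
            "mean_response_seconds": mean_gap}

def at(second):
    return datetime(2024, 1, 1, 10, 0, second)

call = [{"speaker": "A", "start": at(0), "end": at(5)},
        {"speaker": "B", "start": at(7), "end": at(12)},
        {"speaker": "A", "start": at(11), "end": at(15)},
        {"speaker": "A", "start": at(16), "end": at(18)}]
print(interaction_metrics(call))
```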

Real-time Analysis

As processing capabilities continue to improve, we're moving toward real-time conversation analysis that enables immediate intervention:

- Live coaching for customer service representatives during difficult conversations

- Immediate detection of miscommunication or confusion in healthcare consultations

- Real-time meeting facilitation that identifies when topics are going off-track

- Dynamic adaptation of conversational AI systems based on user emotional states

Cross-language Conversation Analysis

Global organizations increasingly need to analyze conversations across multiple languages. Advances in multilingual NLP models are making it possible to:

- Compare customer sentiment across different regions and languages

- Identify universal versus culture-specific communication patterns

- Ensure consistent service quality regardless of language

- Detect regional-specific issues or opportunities

Generative AI for Insight Synthesis

Perhaps the most transformative development is the application of generative AI to conversation analysis. These systems can:

- Automatically generate comprehensive reports from conversation data

- Create visual representations that highlight key patterns and relationships

- Suggest potential actions based on identified conversation patterns

- Continuously learn from feedback to improve insight quality

PageOn.ai's agentic capabilities represent the cutting edge of this approach. By transforming conversational intent into polished visual narratives, these systems bridge the gap between raw conversation data and actionable insights. The ability to automatically generate visual presentations from conversation transcripts dramatically reduces the time from data to decision.

[Chart: Projected Adoption of Advanced Conversation Analysis Technologies]

Bringing It All Together

As we've explored throughout this guide, the transformation of conversational data into visual insights represents one of the most powerful applications of natural language processing. From customer service optimization to healthcare improvements, from educational assessment to business intelligence, the ability to analyze conversations at scale unlocks tremendous value.

The journey from raw conversational data to actionable visual insights requires sophisticated NLP technologies, thoughtful system architecture, ethical considerations, and effective workflows. But when implemented well, these systems can reveal patterns and opportunities that would otherwise remain hidden in the vast sea of human communication.

As I look to the future, I'm excited by the potential of multimodal analysis, real-time processing, cross-language capabilities, and generative AI to further enhance our ability to learn from conversations. These technologies will continue to make insights more accessible, more actionable, and more impactful.

With tools like PageOn.ai that bridge the gap between complex NLP outputs and compelling visual narratives, organizations of all types can now transform their conversational data into strategic assets that drive better decisions, improve experiences, and create competitive advantage.
