AI Risk Assessment Matrix: Transforming Uncertainty into Visual Decision Clarity

Visualize complex AI risks for better decision-making

I've found that transforming abstract AI risks into structured visual frameworks is essential for effective decision-making. In this guide, I'll share how to create powerful risk assessment matrices that evaluate certainty and impact, helping you navigate the complex landscape of AI governance.

Understanding the AI Risk Assessment Landscape

When I first began working with AI systems, I quickly realized that traditional risk assessment approaches were insufficient. AI risks are multidimensional, often abstract, and can evolve rapidly. This is where visual AI risk assessment frameworks become essential tools for decision-makers.

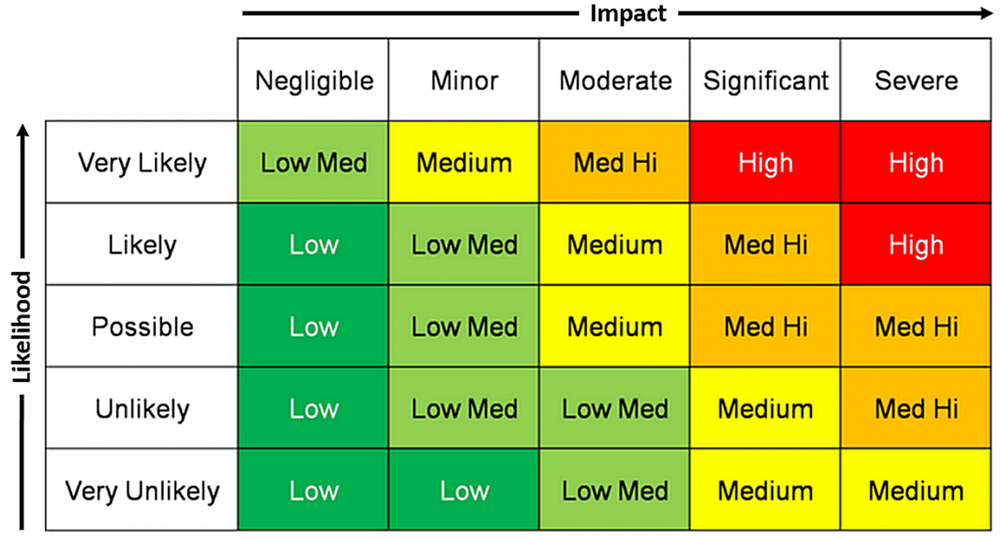

AI risk assessment matrices serve as visual frameworks that transform complex threats and opportunities into structured, actionable insights. These matrices allow organizations to map potential risks along axes of certainty and impact, creating a clear visual representation of where to focus resources and attention.

A visual representation of an AI risk assessment matrix showing the relationship between certainty and impact

The evolution from traditional risk assessment to AI-specific frameworks has been driven by the unique challenges of artificial intelligence systems:

- Unpredictable emergent behaviors in complex AI systems

- Difficulty in quantifying uncertainty in machine learning models

- Rapid technological advancement creating novel risk categories

- Interdisciplinary nature of AI impacts across technical, ethical, and social domains

I've found that visual representation is particularly valuable for complex AI risk scenarios because our brains process visual information more efficiently than text or numbers alone. When stakeholders can literally "see" where risks fall on a matrix, they can make more informed decisions and align on priorities more effectively.

Using PageOn.ai, I've been able to transform abstract risk concepts into clear visual matrices without requiring technical expertise. The platform allows me to quickly iterate on different matrix designs, customize them for specific AI applications, and share them with stakeholders in an accessible format.

Core Components of an Effective AI Risk Matrix

In my experience developing risk frameworks for AI systems, I've found that the most effective matrices are built around two fundamental axes: Certainty (likelihood) and Impact (severity). This creates a powerful visual structure that helps organizations prioritize their response to various AI risks.

Interactive AI Risk Matrix showing the four priority quadrants with sample risk points

Let me break down the quadrant structure and what each represents:

High Impact/High Certainty (Critical Priority)

Risks in this quadrant require immediate attention and mitigation strategies. These are the "known knowns" that pose significant threats to your AI systems or their applications.

High Impact/Low Certainty (Monitoring Priority)

These "known unknowns" have potentially severe consequences but uncertain likelihood. They require continuous monitoring and contingency planning.

Low Impact/High Certainty (Management Priority)

These risks are likely to occur but have limited consequences. They should be managed through standard procedures and efficiency improvements.

Low Impact/Low Certainty (Awareness Priority)

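These are speculative risks with limited potential impact. (True "unknown unknowns" cannot be plotted until someone identifies them, so this quadrant is the closest the matrix comes to tracking them.) They should be periodically reassessed as AI systems evolve and more information becomes available.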
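As a rough illustration, the quadrant logic described above can be sketched in a few lines of Python. The 0.0 to 1.0 scoring scale, the 0.5 cut-off between "low" and "high", the `Risk` class, and the sample risks are all assumptions made for this example, not part of any standard.

```python
from dataclasses import dataclass

# Assumed convention: both scores are normalized to 0.0-1.0 and
# 0.5 is an illustrative boundary between "low" and "high".
THRESHOLD = 0.5


@dataclass(frozen=True)
class Risk:
    name: str
    certainty: float  # likelihood, 0.0-1.0
    impact: float     # severity, 0.0-1.0


def quadrant(risk: Risk, threshold: float = THRESHOLD) -> str:
    """Return the priority label of the quadrant the risk falls in."""
    high_impact = risk.impact >= threshold
    high_certainty = risk.certainty >= threshold
    if high_impact and high_certainty:
        return "Critical Priority"
    if high_impact:
        return "Monitoring Priority"
    if high_certainty:
        return "Management Priority"
    return "Awareness Priority"


# Hypothetical example risks, one per quadrant.
risks = [
    Risk("Training data drift", certainty=0.8, impact=0.7),
    Risk("Novel jailbreak technique", certainty=0.3, impact=0.9),
    Risk("Minor latency regressions", certainty=0.9, impact=0.2),
    Risk("Deprecated logging format", certainty=0.2, impact=0.1),
]

for risk in risks:
    print(f"{risk.name}: {quadrant(risk)}")
```

In practice the threshold deserves as much discussion as the scores themselves, since a risk sitting near the boundary can change quadrant with a small revision to either score.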

I've found that creating customizable risk categories using PageOn.ai's AI Blocks allows organizations to tailor their matrices to specific needs. For example, a healthcare AI system might have specialized categories for patient safety risks, while a financial AI might focus on regulatory compliance and fraud detection.

Color-coding is another essential element of effective risk matrices. Our brains process color information rapidly, making it an intuitive way to signal priority levels. I typically recommend using warm colors (reds and oranges) for high-priority risks and cooler colors (blues and greens) for lower-priority ones, creating an intuitive visual hierarchy that stakeholders can grasp at a glance.
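If you build the matrix in code rather than in a visual tool, the color scheme can be kept in one small lookup table. The sketch below is only one possible palette: the hex values and the choice of which priority gets which color are assumptions, following the warm-to-cool rule of thumb described above.

```python
# Illustrative palette: warm colors for higher priorities, cool colors
# for lower ones. The exact hex values are assumptions.
PRIORITY_COLORS = {
    "Critical Priority": "#d62728",    # red
    "Monitoring Priority": "#ff7f0e",  # orange
    "Management Priority": "#1f77b4",  # blue
    "Awareness Priority": "#2ca02c",   # green
}

FALLBACK_COLOR = "#7f7f7f"  # grey for anything not yet classified


def color_for(priority: str) -> str:
    """Look up the display color for a priority label."""
    return PRIORITY_COLORS.get(priority, FALLBACK_COLOR)


print(color_for("Critical Priority"))
print(color_for("Unclassified"))
```

Keeping the palette in one place makes it easier to swap in a colorblind-safe scheme later without touching the rest of the charting code.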

Building Your Decision-Making Framework

Creating an effective AI risk assessment matrix requires thoughtful approaches to quantifying both certainty and impact. I've developed several techniques that help organizations move from abstract concerns to concrete measurements.

Techniques for Quantifying Certainty

flowchart TD

A[Certainty Assessment] --> B[Historical Data Analysis]

A --> C[Expert Judgment]

A --> D[Simulation & Testing]

A --> E[Uncertainty Quantification Methods]

B --> B1[Failure rates]

B --> B2[Incident patterns]

C --> C1[Delphi method]

C --> C2[Structured elicitation]

D --> D1[Red teaming]

D --> D2[Adversarial testing]

E --> E1[Bayesian approaches]

E --> E2[Monte Carlo simulations]
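To make the Bayesian and Monte Carlo branches of this diagram more concrete, here is a minimal sketch that estimates a failure rate from historical test results. It assumes failures are independent and uses a uniform Beta(1, 1) prior; the function names and the example numbers (3 failures in 200 trials) are hypothetical.

```python
import random


def posterior_failure_rate(failures, trials, prior_alpha=1.0, prior_beta=1.0):
    """Beta-Binomial update: return (alpha, beta) of the posterior."""
    if not 0 <= failures <= trials:
        raise ValueError("failures must be between 0 and trials")
    return prior_alpha + failures, prior_beta + (trials - failures)


def posterior_mean(alpha, beta):
    """Mean of a Beta(alpha, beta) distribution."""
    return alpha / (alpha + beta)


def credible_interval(alpha, beta, level=0.9, samples=20_000, seed=0):
    """Monte Carlo estimate of a central credible interval."""
    rng = random.Random(seed)  # fixed seed so the sketch is repeatable
    draws = sorted(rng.betavariate(alpha, beta) for _ in range(samples))
    lower_index = int((1 - level) / 2 * samples)
    upper_index = int((1 + level) / 2 * samples) - 1
    return draws[lower_index], draws[upper_index]


# Hypothetical history: 3 failures observed in 200 red-team trials.
alpha, beta = posterior_failure_rate(failures=3, trials=200)
low, high = credible_interval(alpha, beta)
print(f"Estimated failure rate: {posterior_mean(alpha, beta):.3f}")
print(f"90% credible interval: {low:.3f} to {high:.3f}")
```

The width of the interval is a useful input for the certainty axis: a wide interval suggests the risk belongs on the low-certainty side of the matrix even if the point estimate looks reassuring.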

When measuring potential impact, I recommend evaluating across multiple domains to capture the full spectrum of potential consequences:

Financial Implications

Quantify potential financial losses from system failures, including direct costs, opportunity costs, and recovery expenses.

Operational Disruption

Measure potential downtime, productivity losses, and disruption to dependent systems or processes.

Reputational Damage

Assess potential harm to brand trust, customer confidence, and public perception from AI failures or misuse.

Regulatory Compliance

Evaluate potential violations of existing or anticipated regulations, including fines and legal consequences.

Ethical Considerations

Consider impacts on fairness, transparency, privacy, and human autonomy that may not be captured in other dimensions.
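One simple way to combine these five domains into a single impact score is a weighted average. The sketch below assumes each domain is rated on a 1 to 5 scale; the weights and the example ratings are hypothetical and should be set by your own stakeholders.

```python
from typing import Mapping

# Hypothetical weights for the five impact domains (they sum to 1.0).
IMPACT_WEIGHTS = {
    "financial": 0.25,
    "operational": 0.20,
    "reputational": 0.20,
    "regulatory": 0.20,
    "ethical": 0.15,
}


def impact_score(ratings: Mapping[str, float],
                 weights: Mapping[str, float] = IMPACT_WEIGHTS) -> float:
    """Weighted average of per-domain ratings (assumed 1-5 scale)."""
    missing = set(weights) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    total_weight = sum(weights.values())
    return sum(weights[d] * ratings[d] for d in weights) / total_weight


# Hypothetical ratings for a single risk.
ratings = {
    "financial": 4,
    "operational": 3,
    "reputational": 5,
    "regulatory": 2,
    "ethical": 4,
}
print(f"Overall impact: {impact_score(ratings):.2f}")
```

A weighted average can hide a single severe domain behind several mild ones, so some teams may prefer to also report the maximum rating alongside the average.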

I've found that using PageOn.ai's Deep Search functionality helps integrate relevant industry benchmarks and standards into risk assessments. This ensures that your matrices are informed by the latest research and best practices in AI safety and governance.

Creating dynamic matrices that adapt to emerging threats requires regular reassessment and updating of your framework. With PageOn.ai, I can quickly adjust visual representations as new information becomes available, ensuring that risk assessments remain current and actionable in a rapidly evolving AI landscape.

Implementation Strategies for Different AI Applications

In my experience, effective risk assessment requires tailoring your approach to specific AI applications. Each type of AI system presents unique risk profiles that must be addressed with specialized frameworks.

When implementing risk matrices for AI agents, I consider the development lifecycle stage and adjust the framework accordingly:

| AI System Type | Unique Risk Factors | Matrix Customization |
|---|---|---|
| Large Language Models (LLMs) | | Emphasize content safety metrics and output verification protocols |
| Computer Vision Systems | | Focus on testing across diverse conditions and privacy protection measures |
| Predictive Analytics Tools | | Include data provenance tracking and confidence interval visualization |
| Autonomous Decision Systems | | Incorporate human-in-the-loop checkpoints and progressive autonomy levels |

For AI development projects, I recommend focusing on technical risks during early stages, then expanding to include deployment and operational risks as the project matures. This progressive approach ensures that risk assessment remains relevant to the current development phase.

flowchart TD

A[AI Project Lifecycle] --> B[Research & Design]

A --> C[Development & Testing]

A --> D[Deployment]

A --> E[Operation & Monitoring]

B --> B1{Risk Focus: Concept Validation}

B1 --> B1a[Technical feasibility risks]

B1 --> B1b[Ethical design considerations]

C --> C1{Risk Focus: Implementation}

C1 --> C1a[Testing coverage]

C1 --> C1b[Performance boundaries]

C1 --> C1c[Security vulnerabilities]

D --> D1{Risk Focus: Integration}

D1 --> D1a[System compatibility]

D1 --> D1b[User adoption challenges]

D1 --> D1c[Initial performance monitoring]

E --> E1{Risk Focus: Ongoing Governance}

E1 --> E1a[Drift detection]

E1 --> E1b[Incident response]

E1 --> E1c[Continuous improvement]

AI project lifecycle stages and their associated risk assessment focus areas

Using PageOn.ai to visualize risk profiles across different implementation stages has transformed how I communicate with stakeholders. The platform's intuitive interface allows me to create dynamic visualizations that show how risk factors evolve throughout the AI lifecycle, helping teams anticipate and prepare for challenges before they arise.

I've found that secure AI agents require particularly careful risk assessment, as they often operate with varying degrees of autonomy. For these systems, I recommend creating specialized matrices that explicitly address the balance between agent capability and control mechanisms.

From Assessment to Action: Decision Protocols

Identifying risks is only the first step—what truly matters is how organizations respond to them. I've developed a systematic approach for translating risk assessments into concrete action plans.

flowchart TD

A[Risk Identified] --> B{Assess Quadrant}

B -->|High Impact/High Certainty| C[Critical Priority]

B -->|High Impact/Low Certainty| D[Monitoring Priority]

B -->|Low Impact/High Certainty| E[Management Priority]

B -->|Low Impact/Low Certainty| F[Awareness Priority]

C --> C1[Immediate Mitigation Required]

C1 --> C2[Assign Executive Sponsor]

C2 --> C3[Develop Action Plan]

C3 --> C4[Implement Controls]

C4 --> C5[Verify Effectiveness]

D --> D1[Establish Monitoring System]

D1 --> D2[Define Trigger Conditions]

D2 --> D3[Develop Contingency Plans]

D3 --> D4[Regular Reassessment]

E --> E1[Document Standard Procedures]

E1 --> E2[Assign Operational Ownership]

E2 --> E3[Implement Efficiency Measures]

E3 --> E4[Periodic Review]

F --> F1[Document in Risk Register]

F1 --> F2[Schedule Reassessment]

F2 --> F3[Monitor for Changes]

Decision protocol flowchart showing response paths for different risk quadrants
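The same protocol can be expressed as a lookup table, which is handy if you want a ticketing or alerting system to attach the right checklist automatically. The steps below are transcribed from the flowchart above; the function names and the use of two boolean flags are assumptions made for this sketch.

```python
# Response steps transcribed from the decision protocol flowchart.
RESPONSE_PROTOCOLS = {
    "Critical Priority": [
        "Immediate mitigation required",
        "Assign executive sponsor",
        "Develop action plan",
        "Implement controls",
        "Verify effectiveness",
    ],
    "Monitoring Priority": [
        "Establish monitoring system",
        "Define trigger conditions",
        "Develop contingency plans",
        "Regular reassessment",
    ],
    "Management Priority": [
        "Document standard procedures",
        "Assign operational ownership",
        "Implement efficiency measures",
        "Periodic review",
    ],
    "Awareness Priority": [
        "Document in risk register",
        "Schedule reassessment",
        "Monitor for changes",
    ],
}


def classify(impact_high: bool, certainty_high: bool) -> str:
    """Map the two axes to the quadrant's priority label."""
    if impact_high:
        return "Critical Priority" if certainty_high else "Monitoring Priority"
    return "Management Priority" if certainty_high else "Awareness Priority"


def response_plan(impact_high: bool, certainty_high: bool) -> list:
    """Return the ordered response steps for a risk."""
    return RESPONSE_PROTOCOLS[classify(impact_high, certainty_high)]


# Example: a high-impact risk whose likelihood is still uncertain.
for number, step in enumerate(response_plan(True, False), start=1):
    print(f"{number}. {step}")
```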

For high-impact scenarios, I recommend developing detailed visual decision trees that guide response teams through the necessary steps. These trees should include:

- Clear trigger conditions that initiate the response protocol

- Defined roles and responsibilities for each step

- Communication channels and escalation paths

- Decision points with explicit criteria

- Documentation requirements for actions taken

Establishing thresholds for escalation is critical for effective risk management. I typically recommend a tiered approach.

Designing feedback loops is essential for improving risk assessment accuracy over time. I recommend implementing:

Incident Analysis

Systematically review actual incidents against predicted risks to identify assessment gaps.

Near-Miss Reporting

Capture and analyze situations where risks nearly materialized but were averted.

Prediction Tracking

Record risk predictions and compare against actual outcomes to calibrate future assessments.
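For prediction tracking, one common way to measure calibration is the Brier score: the mean squared difference between the predicted probability and what actually happened (0 is perfect; always guessing 50% scores 0.25). This is a suggested metric rather than something prescribed by the framework, and the sample predictions are made up for illustration.

```python
def brier_score(predictions):
    """Mean squared error between predicted probabilities and outcomes.

    `predictions` is a list of (predicted_probability, occurred) pairs,
    where `occurred` is True if the risk materialized.
    """
    if not predictions:
        raise ValueError("at least one prediction is required")
    squared_errors = [
        (probability - (1.0 if occurred else 0.0)) ** 2
        for probability, occurred in predictions
    ]
    return sum(squared_errors) / len(squared_errors)


# Hypothetical tracking log: (predicted probability, did it happen?)
log = [
    (0.8, True),   # predicted likely, and it happened
    (0.3, False),  # predicted unlikely, did not happen
    (0.9, False),  # predicted very likely, did not happen
    (0.1, False),  # predicted very unlikely, did not happen
]
print(f"Brier score: {brier_score(log):.4f}")
```

Tracking this number across review cycles gives a simple signal of whether certainty estimates are getting better or worse over time.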

I've found that leveraging PageOn.ai's AI Blocks to build interconnected decision frameworks has significantly improved response effectiveness. The platform allows me to create visual representations of decision protocols that are both comprehensive and easy to follow, ensuring that teams can respond quickly and effectively when risks materialize.

By connecting your risk assessment matrix directly to action protocols, you create a seamless path from identification to resolution, dramatically improving your organization's ability to manage AI risks effectively.

Case Studies: Successful AI Risk Matrix Applications

Throughout my career working with AI risk assessment, I've seen organizations across various sectors successfully implement risk matrices to improve their AI governance. These real-world examples demonstrate how visual risk frameworks can transform abstract concerns into concrete action plans.

Financial Services: Algorithmic Trading Risk Prevention

A global investment firm implemented a specialized risk matrix for their algorithmic trading systems that focused on:

- Market volatility impact assessment

- Liquidity risk visualization

- Regulatory compliance monitoring

- System failure cascade analysis

Result:

The matrix helped identify a previously overlooked interaction between market volatility and algorithm behavior, allowing the firm to implement circuit breakers that prevented a potential $15M loss during an unusual market event.

Healthcare: Patient Safety in AI Diagnostic Tools

A hospital network developed a risk matrix for their AI diagnostic imaging system that emphasized:

- False negative risk stratification

- Patient demographic representation

- Clinician oversight integration

- Edge case identification

Result:

The matrix revealed underrepresentation of certain patient demographics in training data, leading to targeted data collection that improved diagnostic accuracy by 12% for previously underserved populations.

Manufacturing: Quality Control in AI-Powered Automation

An automotive components manufacturer implemented a risk matrix for their AI quality inspection system that focused on:

- Environmental condition variations

- Component variation detection

- System calibration drift

- False acceptance rate management

Result:

The matrix guided the development of a calibration protocol that reduced false acceptance rates by 87%, preventing potentially defective parts from reaching customers and avoiding costly recalls.

Public Sector: Ethical Considerations in Predictive Policing

A municipal police department created a risk matrix for their predictive policing system that emphasized:

- Bias detection and mitigation

- Community impact assessment

- Transparency mechanisms

- Human oversight requirements

Result:

The matrix led to the implementation of a community oversight board and transparent reporting system, increasing public trust while maintaining the effectiveness of crime prevention efforts.

Using PageOn.ai to craft visual narratives around these case studies has been invaluable for communicating complex risk scenarios effectively. The platform's intuitive interface allows me to create compelling visualizations that illustrate both the risk assessment process and the concrete outcomes achieved.

These case studies demonstrate that effective visual risk frameworks are not just theoretical exercises—they drive tangible improvements in AI system safety, performance, and trustworthiness across diverse applications and industries.

Future-Proofing Your AI Risk Assessment Framework

The AI landscape is evolving rapidly, with new capabilities, challenges, and regulatory requirements emerging constantly. I've developed several strategies to ensure that risk assessment frameworks remain relevant and effective over time.

Integrating Emerging Risk Factors

Adapting to evolving regulatory landscapes is essential for maintaining compliant AI systems. I recommend establishing a dedicated process for monitoring regulatory developments and incorporating them into your risk assessment framework. This should include:

flowchart TD

A[Regulatory Monitoring] --> B[Identify Relevant Jurisdictions]

A --> C[Track Legislative Developments]

A --> D[Monitor Enforcement Actions]

A --> E[Engage with Industry Groups]

B --> F[Regulatory Impact Analysis]

C --> F

D --> F

E --> F

F --> G[Gap Assessment]

G --> H[Framework Update]

H --> I[Documentation Update]

I --> J[Team Training]

J --> K[Compliance Verification]

Regulatory adaptation process for AI risk assessment frameworks

Creating scenario planning visualizations for potential AI developments helps organizations prepare for a range of possible futures. I typically recommend developing at least three scenarios:

Baseline Scenario

Extrapolation of current trends and technologies with moderate advancement rates and expected regulatory developments.

Acceleration Scenario

Rapid technological breakthroughs, increased capabilities, and potential disruption requiring agile adaptation.

Constraint Scenario

Increased regulatory restrictions, public concern, and technical limitations requiring robust compliance mechanisms.

Building organizational capacity for ongoing risk assessment is perhaps the most important aspect of future-proofing. This involves:

- Developing internal expertise across technical, ethical, and regulatory dimensions

- Creating cross-functional risk assessment teams

- Establishing regular review cycles and clear ownership

- Implementing knowledge management systems to preserve insights

- Fostering a culture that values proactive risk identification

I've found that using PageOn.ai's agentic capabilities transforms risk management intentions into visual frameworks for AI safety that evolve with organizational needs. The platform's ability to quickly generate and iterate on visualizations makes it easier to keep risk frameworks current and relevant as the AI landscape changes.

By implementing these future-proofing strategies, organizations can ensure that their AI risk assessment frameworks remain effective tools for navigating uncertainty and making confident decisions in a rapidly evolving technological landscape.

Practical Implementation Guide

Getting Started

When I implement AI risk assessment matrices with organizations, I follow a structured approach that ensures comprehensive coverage while remaining practical and actionable.

Essential Tools for Creating Your First AI Risk Assessment Matrix

Collaborative Visualization Platform

Use a tool like PageOn.ai that allows multiple stakeholders to contribute to and interact with risk visualizations.

Risk Register Template

Create a standardized format for documenting identified risks, their assessment, and mitigation strategies.

Assessment Rubric

Develop clear criteria for evaluating certainty and impact levels to ensure consistency across assessments.

Documentation System

Implement a system for capturing the rationale behind risk assessments and decisions made.
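As a starting point for the risk register template, here is a minimal sketch of a register entry and a CSV export using only the Python standard library. The field names, the 1 to 5 rubric levels, and the sample entry are assumptions; adapt them to your own rubric and documentation system.

```python
import csv
import io
from dataclasses import dataclass, asdict, fields
from datetime import date


@dataclass
class RiskRegisterEntry:
    risk_id: str
    description: str
    certainty: int     # rubric level, assumed 1 (low) to 5 (high)
    impact: int        # rubric level, assumed 1 (low) to 5 (high)
    rationale: str     # why these levels were chosen
    mitigation: str
    owner: str
    assessed_on: date


def to_csv(entries) -> str:
    """Serialize register entries to CSV text with a header row."""
    buffer = io.StringIO()
    columns = [field.name for field in fields(RiskRegisterEntry)]
    writer = csv.DictWriter(buffer, fieldnames=columns)
    writer.writeheader()
    for entry in entries:
        writer.writerow(asdict(entry))
    return buffer.getvalue()


# Hypothetical entry for illustration only.
register = [
    RiskRegisterEntry(
        risk_id="R-001",
        description="Model output drifts after upstream data change",
        certainty=4,
        impact=3,
        rationale="Two similar incidents in the past year",
        mitigation="Add drift detection alerts",
        owner="ML platform team",
        assessed_on=date(2025, 1, 15),
    )
]
print(to_csv(register))
```

Storing the rationale next to the scores keeps the register and the decision log in one place, which makes later reviews easier to audit.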

Data Collection Methodologies

flowchart TD

A[Data Collection Methods] --> B[Expert Consultation]

A --> C[Historical Incident Analysis]

A --> D[Threat Modeling]

A --> E[Literature Review]

A --> F[User/Stakeholder Feedback]

B --> B1[Structured interviews]

B --> B2[Delphi panels]

C --> C1[Internal incident database]

C --> C2[Industry reports]

D --> D1[Attack tree analysis]

D --> D2[STRIDE methodology]

E --> E1[Academic research]

E --> E2[Industry standards]

F --> F1[User testing]

F --> F2[Feedback channels]

Data collection methods for comprehensive AI risk assessment

Stakeholder Engagement Strategies

I've found that comprehensive risk identification requires input from diverse perspectives. Effective stakeholder engagement strategies include:

- Cross-functional workshops with technical, business, legal, and ethical representatives

- Structured risk identification exercises using techniques like SWIFT (Structured What-If Technique)

- Regular review sessions to capture emerging concerns and insights

- Anonymous input channels to surface concerns that might otherwise remain hidden

- External expert consultation for specialized domains or novel applications

Using PageOn.ai to rapidly prototype different matrix configurations has transformed my stakeholder engagement process. The platform allows me to:

- Quickly visualize stakeholder input in real-time during workshops

- Create alternative matrix designs to compare different approaches

- Generate interactive visualizations that stakeholders can explore independently

- Easily update visualizations as new insights emerge

Continuous Improvement

Effective risk assessment frameworks are never static—they must evolve with your organization and the AI landscape. I recommend implementing a structured approach to continuous improvement.

Scheduled Review Protocols

I recommend implementing a multi-layered review schedule:

- Quarterly review of high-priority risks

- Semi-annual comprehensive framework assessment

- Annual deep-dive reassessment

Triggers for Reassessment

Beyond scheduled reviews, specific events should trigger immediate reassessment:

- Significant AI system changes or upgrades

- Relevant incidents (internal or external)

- New regulatory requirements

- Major changes in use context or scale
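The scheduled reviews and event triggers can be combined into a single check. The sketch below is one possible encoding: the event names, the cadence keys, and the day counts (roughly quarterly, semi-annual, and annual) are assumptions chosen to mirror the lists above.

```python
from datetime import date, timedelta

# Approximate review cadences in days (assumed values).
CADENCE_DAYS = {
    "high_priority": 91,   # quarterly review of high-priority risks
    "framework": 182,      # semi-annual framework assessment
    "deep_dive": 365,      # annual deep-dive reassessment
}

# Events that trigger immediate reassessment (hypothetical labels).
TRIGGER_EVENTS = {
    "system_change",
    "incident",
    "new_regulation",
    "context_or_scale_change",
}


def reassessment_due(last_review: date, today: date,
                     cadence: str, events=()) -> bool:
    """True if a trigger event occurred or the scheduled review is due."""
    if TRIGGER_EVENTS.intersection(events):
        return True
    return today - last_review >= timedelta(days=CADENCE_DAYS[cadence])


last = date(2025, 1, 1)
print(reassessment_due(last, date(2025, 2, 1), "high_priority"))
print(reassessment_due(last, date(2025, 2, 1), "high_priority",
                       events=["incident"]))
print(reassessment_due(last, date(2025, 4, 2), "high_priority"))
```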

Documentation Best Practices

Thorough documentation is essential for tracking risk evolution and demonstrating due diligence. Key documentation practices include:

- Version control for risk matrices and assessment criteria

- Decision logs capturing the rationale for risk evaluations

- Evidence collection supporting certainty and impact assessments

- Action tracking for mitigation measures

- Regular snapshots of the risk landscape for trend analysis

I've found that leveraging PageOn.ai's visual capabilities to track changes in risk profiles over time provides invaluable insights. The platform allows me to create visual timelines showing how specific risks have evolved, helping organizations identify trends and patterns that might otherwise remain hidden.

Conclusion: Mastering Uncertainty Through Visual Clarity

Throughout this guide, I've shared my approach to transforming abstract AI risks into structured visual frameworks that drive better decision-making. As AI systems become increasingly integrated into critical operations across industries, the ability to effectively assess and manage risks becomes a significant competitive advantage.

Organizations that implement structured AI risk assessment matrices gain several key benefits:

- Enhanced decision-making through clear prioritization of risks

- Improved resource allocation focused on the most critical concerns

- Better stakeholder alignment around risk management priorities

- Increased organizational confidence in AI implementation

- Stronger regulatory compliance and governance

Building a culture of thoughtful AI risk management requires more than just tools and frameworks—it demands ongoing commitment to identifying, assessing, and addressing potential issues before they materialize. By making risk assessment visual and accessible, organizations can engage stakeholders across technical and non-technical roles, creating shared ownership of AI governance.

The journey from uncertainty to clarity isn't a one-time exercise but an ongoing process of refinement and adaptation. As AI capabilities continue to advance, so too must our approaches to understanding and managing the associated risks. Visual risk assessment matrices provide a powerful foundation for this journey, enabling organizations to navigate complexity with confidence.

PageOn.ai transforms abstract risk concepts into actionable visual frameworks that drive better decision-making. By leveraging its intuitive interface and powerful visualization capabilities, you can create risk assessment matrices that evolve with your needs, helping your organization navigate the complex landscape of AI governance with confidence and clarity.

